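Beverage category recognition helps shoppers identify and tell apart different kinds of drinks quickly, which improves the shopping experience. For retailers, it supports inventory management, shelf layout optimization, and sales forecasting, which in turn improves operating efficiency and profit. It can also be applied to vending machines, smart shopping systems, and similar scenarios to make shopping more convenient.

This post builds a beverage category recognition task on top of YOLOv12 for automatic detection of beverage categories, and walks through the full workflow: dataset description, training process, and test results.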


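🌷🌷1. Dataset overview

The beverage category recognition dataset covers 16 common beverages in a specific scene (class IDs 0 to 15):

0: AD (AD钙奶, AD calcium milk)

1: Wangzai (旺仔牛奶, Wangzai milk)

2: Wanglaoji (王老吉, Wanglaoji herbal tea)

3: Qingdao (青岛啤酒, Tsingtao beer)

4: Xuebi (雪碧, Sprite)

5: Qixi (七喜, 7 Up)

6: Weita (维他, Vita)

7: Dongpeng (东鹏特饮, Dongpeng Special Drink)

8: Baishi (百事, Pepsi)

9: Hongniu (红牛, Red Bull)

10: Xingbake (星巴克, Starbucks)

11: Yongchuangtianya (勇闯天涯, Yongchuang Tianya beer)

12: Qingshuang (清爽啤酒, Qingshuang beer)

13: Fenda_Orange (橘色芬达, orange Fanta)

14: Meinianda_Orange (橘色美年达, orange Mirinda)

15: Meinianda_Green (绿色美年达, green Mirinda)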

Some sample images are shown below.

Labels are txt files in the YOLO object detection format, one txt file per image.
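As a rough illustration of that format: each line of a YOLO label file holds `class_id x_center y_center width height`, where the four box values are normalized to the range 0 to 1 by the image width and height. The sketch below converts one such line to pixel corner coordinates. The helper name `yolo_line_to_pixels` and the sample line are made up for illustration and are not taken from the dataset.

```python
def yolo_line_to_pixels(line, img_w, img_h):
    """Convert one YOLO label line to (class_id, x1, y1, x2, y2) in pixels."""
    parts = line.split()
    class_id = int(parts[0])
    # Box values are normalized center x, center y, width, height
    xc, yc, w, h = (float(v) for v in parts[1:5])
    x1 = (xc - w / 2) * img_w
    y1 = (yc - h / 2) * img_h
    x2 = (xc + w / 2) * img_w
    y2 = (yc + h / 2) * img_h
    return class_id, x1, y1, x2, y2


# Hypothetical label line: class 4 (Xuebi), box centered in a 640x480 image
sample_line = "4 0.5 0.5 0.25 0.5"
print(yolo_line_to_pixels(sample_line, 640, 480))
```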

The train/validation split ratio can be adjusted as you like, so I won't go into it here.
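If you need to make the split yourself, something like the following could work. It is only a sketch: the 80/20 ratio, the fixed seed, and the helper name `split_train_val` are my own assumptions, not the settings used for this dataset.

```python
import random


def split_train_val(image_names, val_ratio=0.2, seed=0):
    """Shuffle file names reproducibly and split them into train and val lists."""
    names = sorted(image_names)           # sort first so the result does not depend on input order
    random.Random(seed).shuffle(names)    # seeded shuffle for reproducibility
    n_val = int(len(names) * val_ratio)
    return names[n_val:], names[:n_val]   # (train, val)


# Hypothetical file names, for illustration only
all_images = [f"img_{i:03d}.jpg" for i in range(10)]
train_names, val_names = split_train_val(all_images)
print(len(train_names), len(val_names))
```

Each image's txt label file should go to the matching labels/train or labels/val folder.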

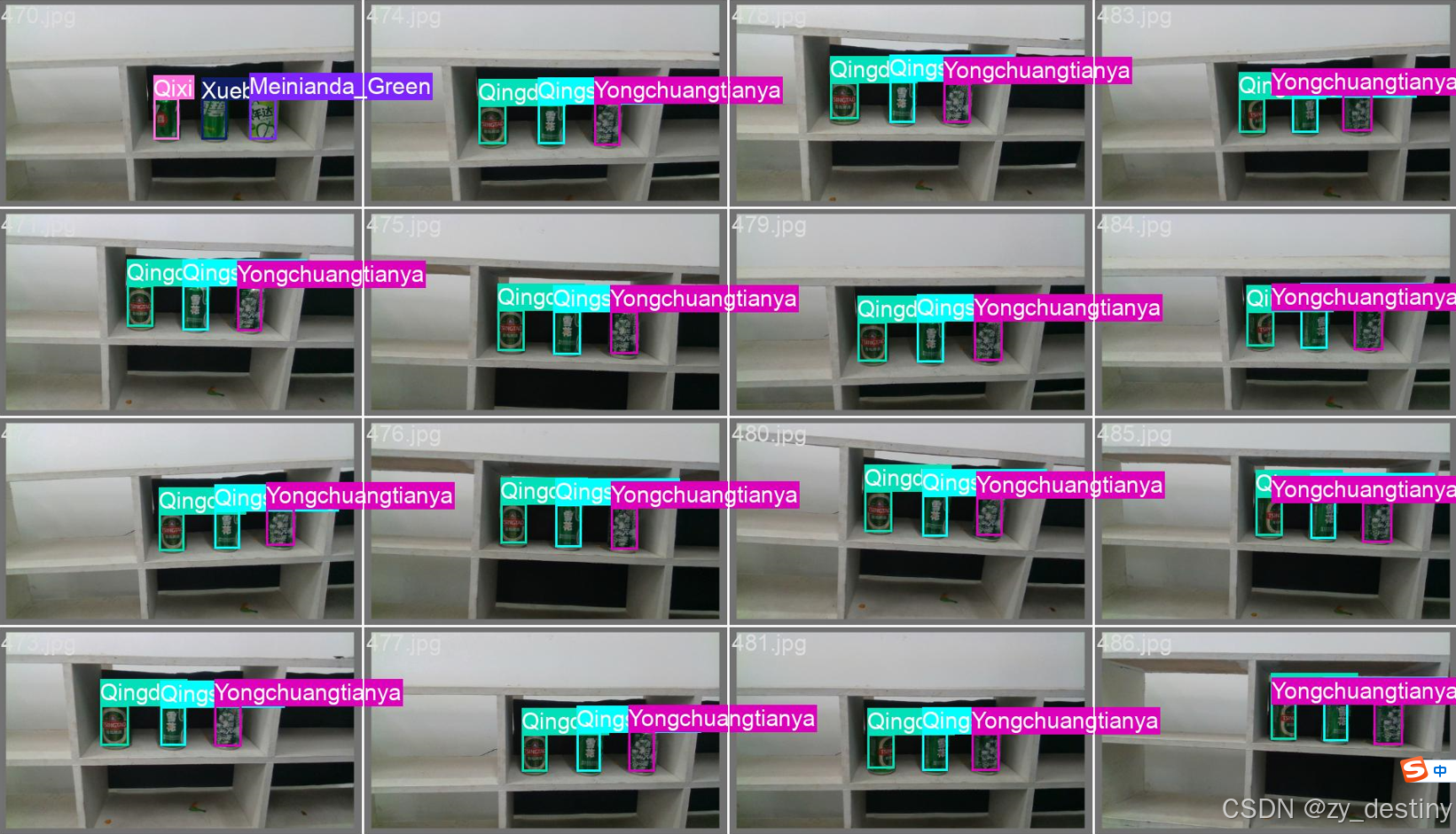

👍👍2. Beverage category detection results

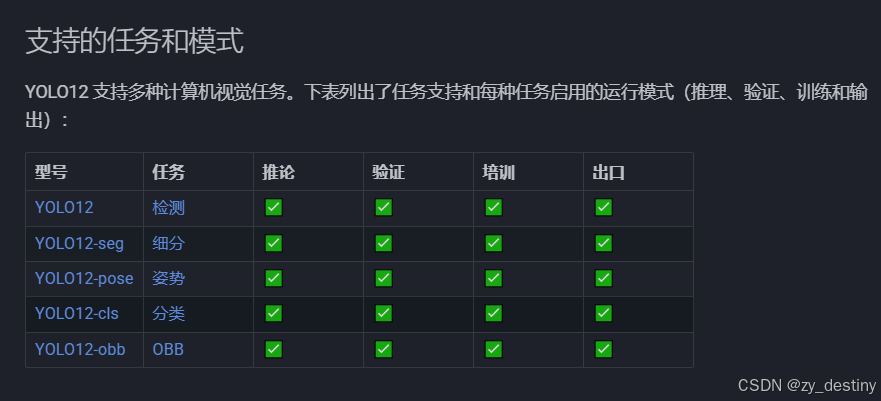

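🍎🍎3. Steps for recognizing beverage packaging categories with YOLOv12

Beverage categories can be recognized with many object detection methods; this post uses YOLOv12 as the example.

🍋3.1 Data preparation

Data organization:

    ----yinliao_dataset
        ----images
            ----train
            ----val
        ----labels
            ----train
            ----val

The images/train folder contains: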

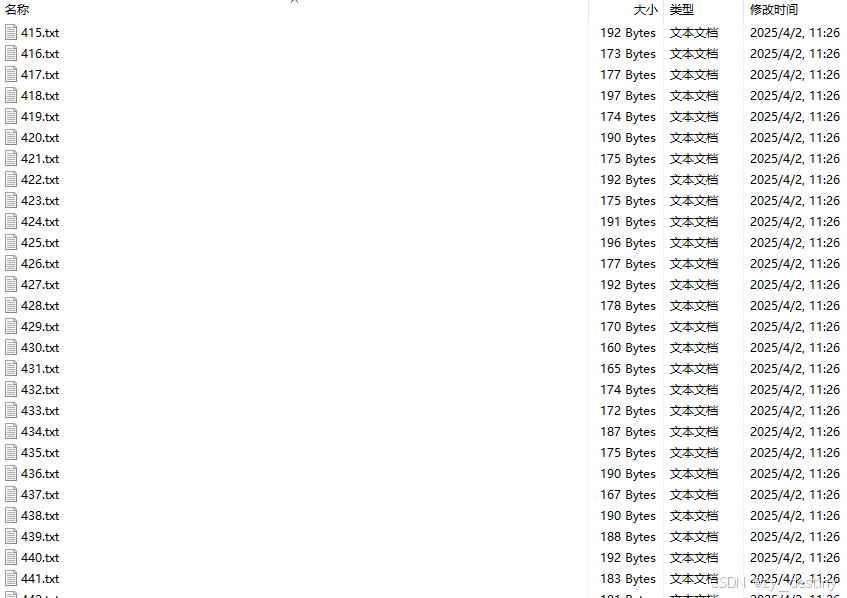

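The labels/train folder holds the matching txt label files.

Training uses YOLO-format txt labels, so if your own dataset is annotated in XML or JSON, it has to be converted first.

The txt format is the one described in section 1 (Dataset overview).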
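For XML annotations, the conversion could look roughly like this. This is a minimal sketch that assumes Pascal VOC style XML (`size/width`, `size/height`, and `object` entries with `name` and `bndbox`); the helper name `voc_xml_to_yolo_lines` and the sample annotation are made up for illustration. The class order follows the list in section 1.

```python
import xml.etree.ElementTree as ET

# Class order taken from the dataset list in section 1
CLASS_NAMES = [
    "AD", "Wangzai", "Wanglaoji", "Qingdao", "Xuebi", "Qixi", "Weita", "Dongpeng",
    "Baishi", "Hongniu", "Xingbake", "Yongchuangtianya", "Qingshuang",
    "Fenda_Orange", "Meinianda_Orange", "Meinianda_Green",
]


def voc_xml_to_yolo_lines(xml_text, class_names):
    """Convert one Pascal VOC style annotation string to YOLO label lines."""
    root = ET.fromstring(xml_text)
    size = root.find("size")
    img_w = float(size.find("width").text)
    img_h = float(size.find("height").text)
    lines = []
    for obj in root.iter("object"):
        name = obj.find("name").text
        if name not in class_names:
            continue  # skip classes that are not part of this dataset
        box = obj.find("bndbox")
        xmin, ymin, xmax, ymax = (
            float(box.find(tag).text) for tag in ("xmin", "ymin", "xmax", "ymax")
        )
        # YOLO stores the normalized box center and size
        xc = (xmin + xmax) / 2 / img_w
        yc = (ymin + ymax) / 2 / img_h
        w = (xmax - xmin) / img_w
        h = (ymax - ymin) / img_h
        lines.append(f"{class_names.index(name)} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}")
    return lines


# Hypothetical annotation, for illustration only
SAMPLE_XML = """
<annotation>
  <size><width>640</width><height>480</height></size>
  <object>
    <name>Xuebi</name>
    <bndbox><xmin>160</xmin><ymin>120</ymin><xmax>480</xmax><ymax>360</ymax></bndbox>
  </object>
</annotation>
"""
print("\n".join(voc_xml_to_yolo_lines(SAMPLE_XML, CLASS_NAMES)))
```

Write the returned lines to a txt file with the same base name as the image and place it under labels/train or labels/val.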

🍋3.2 Model selection

Taking YOLOv12 as the example, the model selection code is as follows:

    from ultralytics import YOLO

    # Load a model (choose one of the three options)
    model = YOLO('yolov12n.yaml')  # build a new model from YAML
    model = YOLO('yolov12n.pt')  # load a pretrained model (recommended for training)
    model = YOLO('yolov12n.yaml').load('yolov12n.pt')  # build from YAML and transfer weights

Here yolov12n.yaml refers to ./ultralytics/cfg/models/v12/yolov12n.yaml, which you can adjust to fit your own data. Opening yolov12n.yaml shows the following:

    # YOLOv12 🚀, AGPL-3.0 license
    # YOLOv12 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

    # Parameters
    nc: 80 # number of classes
    scales: # model compound scaling constants, i.e. 'model=yolov12n.yaml' will call yolov12.yaml with scale 'n'
      # [depth, width, max_channels]
      n: [0.50, 0.25, 1024] # summary: 465 layers, 2,603,056 parameters, 2,603,040 gradients, 6.7 GFLOPs
      s: [0.50, 0.50, 1024] # summary: 465 layers, 9,285,632 parameters, 9,285,616 gradients, 21.7 GFLOPs
      m: [0.50, 1.00, 512] # summary: 501 layers, 20,201,216 parameters, 20,201,200 gradients, 68.1 GFLOPs
      l: [1.00, 1.00, 512] # summary: 831 layers, 26,454,880 parameters, 26,454,864 gradients, 89.7 GFLOPs
      x: [1.00, 1.50, 512] # summary: 831 layers, 59,216,928 parameters, 59,216,912 gradients, 200.3 GFLOPs

    # YOLO12n backbone
    backbone:
      # [from, repeats, module, args]
      - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
      - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
      - [-1, 2, C3k2, [256, False, 0.25]]
      - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
      - [-1, 2, C3k2, [512, False, 0.25]]
      - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
      - [-1, 4, A2C2f, [512, True, 4]]
      - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
      - [-1, 4, A2C2f, [1024, True, 1]] # 8

    # YOLO12n head
    head:
      - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
      - [[-1, 6], 1, Concat, [1]] # cat backbone P4
      - [-1, 2, A2C2f, [512, False, -1]] # 11

      - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
      - [[-1, 4], 1, Concat, [1]] # cat backbone P3
      - [-1, 2, A2C2f, [256, False, -1]] # 14

      - [-1, 1, Conv, [256, 3, 2]]
      - [[-1, 11], 1, Concat, [1]] # cat head P4
      - [-1, 2, A2C2f, [512, False, -1]] # 17

      - [-1, 1, Conv, [512, 3, 2]]
      - [[-1, 8], 1, Concat, [1]] # cat head P5
      - [-1, 2, C3k2, [1024, True]] # 20 (P5/32-large)

      - [[14, 17, 20], 1, Detect, [nc]] # Detect(P3, P4, P5)

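The main thing to change is nc, the number of classes. This dataset has 16 classes, so set nc=16.

If you leave the other model parameters unchanged, you get the stock YOLOv12. Modifying the model architecture is outside the scope of this post.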
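The nc change can also be scripted instead of edited by hand. A minimal sketch, assuming the YAML keeps a top-level `nc:` line as in the listing above; `set_num_classes` is a hypothetical helper of mine, not part of Ultralytics, and it works on plain text so the comments in the file are preserved.

```python
import re


def set_num_classes(yaml_text, num_classes):
    """Return yaml_text with the value on the top-level `nc:` line replaced."""
    # (?m) makes ^ match at each line start; only the first match is replaced
    return re.sub(r"(?m)^nc:\s*\d+", f"nc: {num_classes}", yaml_text, count=1)


original = "nc: 80 # number of classes\nscales:\n"
print(set_num_classes(original, 16))
```

In practice you would read the model YAML into a string, pass it through the helper, and write the result to a new file so the original config stays untouched.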

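🍋3.3 Loading the pretrained model

The pretrained model yolov12n.pt can be downloaded automatically on the first run. If your download speed is limited, you can also download it manually and place it in the corresponding directory.

🍋3.4 Input data organization

YOLOv12 again uses YOLO-format data. An example of ./ultralytics/cfg/datasets/data.yaml: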

    # Train/val/test sets as 1) dir: path/to/imgs, 2) file: path/to/imgs.txt, or 3) list: [path/to/imgs1, path/to/imgs2, ..]
    path: ../datasets/coco8 # dataset root dir
    train: images/train # train images (relative to 'path') 4 images
    val: images/val # val images (relative to 'path') 4 images
    test: # test images (optional)

    # Classes (80 COCO classes)
    names:
      0: person
      1: bicycle
      2: car
      # ...
      77: teddy bear
      78: hair drier
      79: toothbrush

This is the official COCO example config, and it needs to be replaced with one that matches your own dataset. I suggest creating a new yinliao_detect_coco128.yaml for your dataset under ./ultralytics/cfg/datasets/, so that the dataset setting can simply point to that file. My yinliao_detect_coco128.yaml looks like this:

    path: /home/datasets/yinliao  # dataset root dir
    train: images/train  # train images (relative to 'path')
    val: images/val  # val images (relative to 'path')
    test: images/test  # test images (optional)

    names:
      0: AD
      1: Wangzai
      2: Wanglaoji
      3: Qingdao
      4: Xuebi
      5: Qixi
      6: Weita
      7: Dongpeng
      8: Baishi
      9: Hongniu
      10: Xingbake
      11: Yongchuangtianya
      12: Qingshuang
      13: Fenda_Orange
      14: Meinianda_Orange
      15: Meinianda_Green
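To keep the class IDs in the dataset YAML in sync with the class list, the file can be generated instead of typed by hand. The following is a sketch under my own assumptions: `build_dataset_yaml` is a hypothetical helper, and it only emits the `path`, `train`, `val`, and `names` keys shown in the listing above.

```python
CLASS_NAMES = [
    "AD", "Wangzai", "Wanglaoji", "Qingdao", "Xuebi", "Qixi", "Weita", "Dongpeng",
    "Baishi", "Hongniu", "Xingbake", "Yongchuangtianya", "Qingshuang",
    "Fenda_Orange", "Meinianda_Orange", "Meinianda_Green",
]


def build_dataset_yaml(root, class_names, train="images/train", val="images/val"):
    """Build the text of a YOLO dataset YAML with consecutive class IDs."""
    lines = [
        f"path: {root}  # dataset root dir",  # note the space before '#'
        f"train: {train}",
        f"val: {val}",
        "names:",
    ]
    # Class IDs are assigned in list order, starting from 0
    lines += [f"  {i}: {name}" for i, name in enumerate(class_names)]
    return "\n".join(lines) + "\n"


yaml_text = build_dataset_yaml("/home/datasets/yinliao", CLASS_NAMES)
print(yaml_text)
```

Note the space before `#` on the `path` line: without it, YAML treats the `#` and everything after it as part of the path value.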

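🍭🍭4. Object detection training code

With the data and model ready, training can begin. train.py looks like this:

    from ultralytics import YOLO

    # Load a model
    #model = YOLO('yolov12n.yaml') # build a new model from YAML
    #model = YOLO('yolov12n.pt') # load a pretrained model (recommended for training)
    model = YOLO('yolov12n.yaml').load('yolov12n.pt') # build from YAML and transfer weights

    # Train the model
    results = model.train(data='yinliao_detect_coco128.yaml', epochs=200, imgsz=640)

I usually fine-tune from a pretrained YOLO model rather than training from scratch. If you do want to train from scratch, use the first option (build from YAML only). The third option (build from YAML, then transfer the pretrained weights) is the one I recommend here.

⭐4.1 Training process

Once training starts, the log is printed, as shown below: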

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

199/200 2.62G 0.3875 0.4138 0.8016 45 640: 100%|██████████| 11/11 [00:01<00:00, 8.74it/s]

Class Images Instances Box(P R mAP50 mAP50-95): 100%|██████████| 6/6 [00:00<00:00, 8.67it/s]

all 176 484 0.993 1 0.995 0.935

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size

200/200 2.63G 0.3813 0.4127 0.8208 43 640: 100%|██████████| 11/11 [00:01<00:00, 8.83it/s]

Class Images Instances Box(P R mAP50 mAP50-95): 100%|██████████| 6/6 [00:00<00:00, 8.78it/s]

all 176 484 0.993 1 0.995 0.935

200 epochs completed in 0.151 hours.

Optimizer stripped from runs/detect/train5/weights/last.pt, 5.5MB

Optimizer stripped from runs/detect/train5/weights/best.pt, 5.5MB

Validating runs/detect/train5/weights/best.pt...

Ultralytics 8.3.63 🚀 Python-3.11.0 torch-2.6.0+cu124 CUDA:0 (NVIDIA RTX A6000, 45623MiB)

YOLOv12 summary (fused): 352 layers, 2,559,848 parameters, 0 gradients, 6.3 GFLOPs

Class Images Instances Box(P R mAP50 mAP50-95): 100%|██████████| 6/6 [00:01<00:00, 4.51it/s]

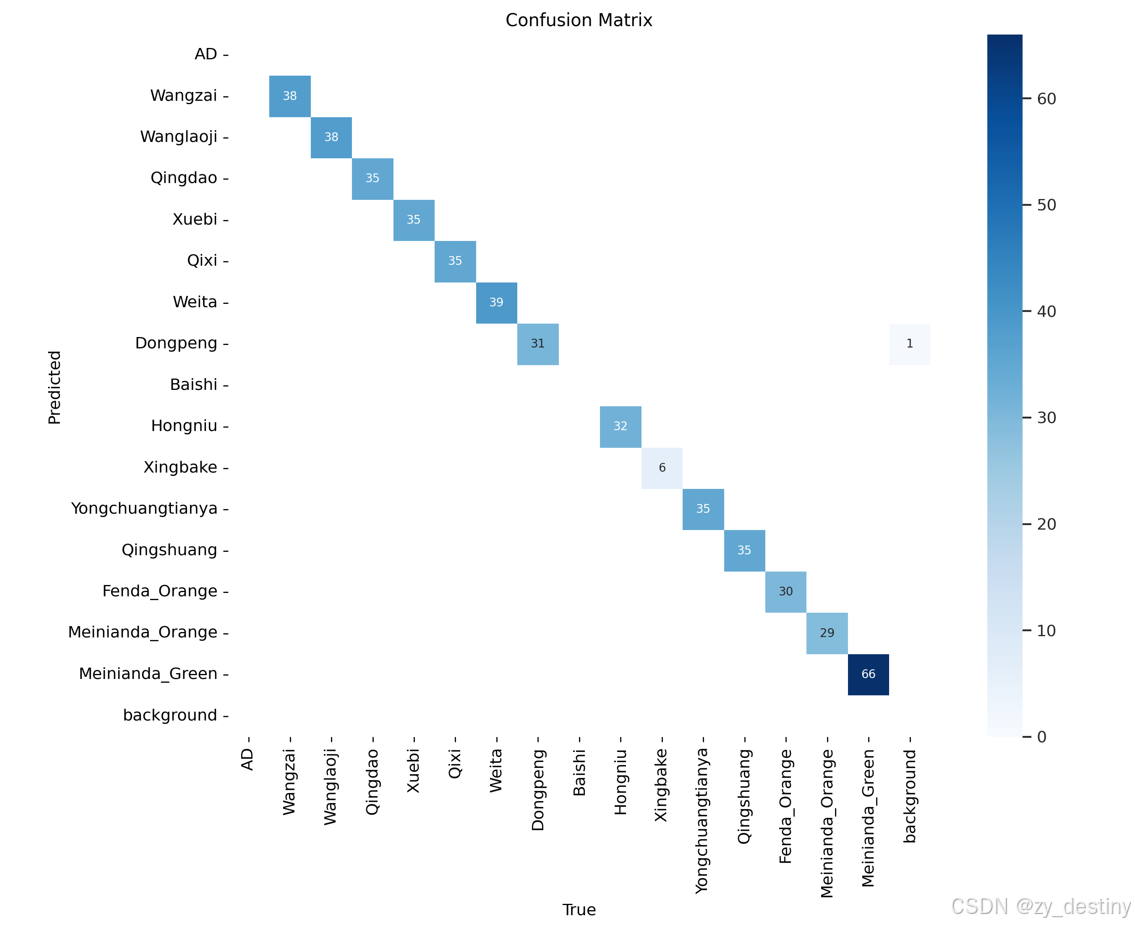

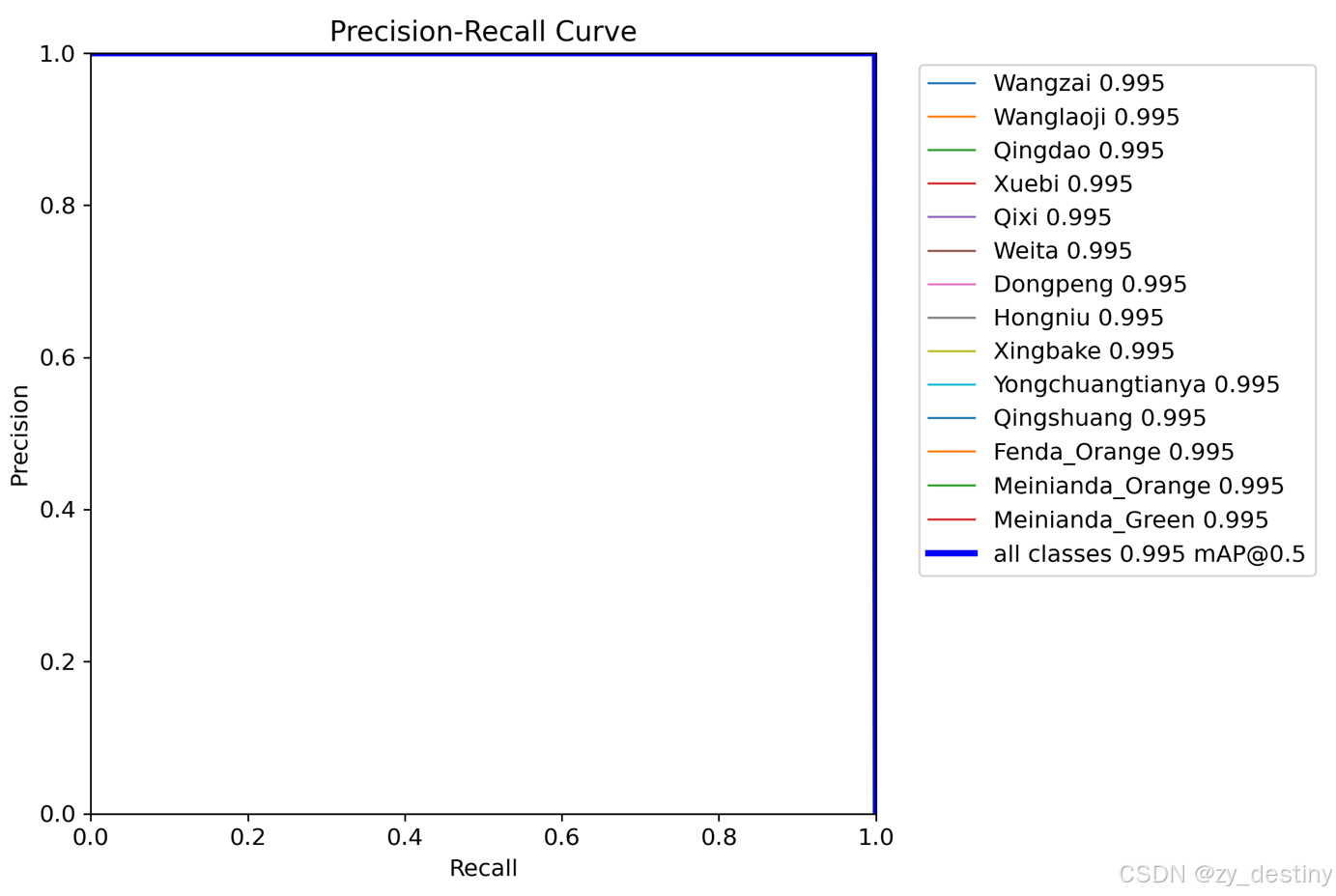

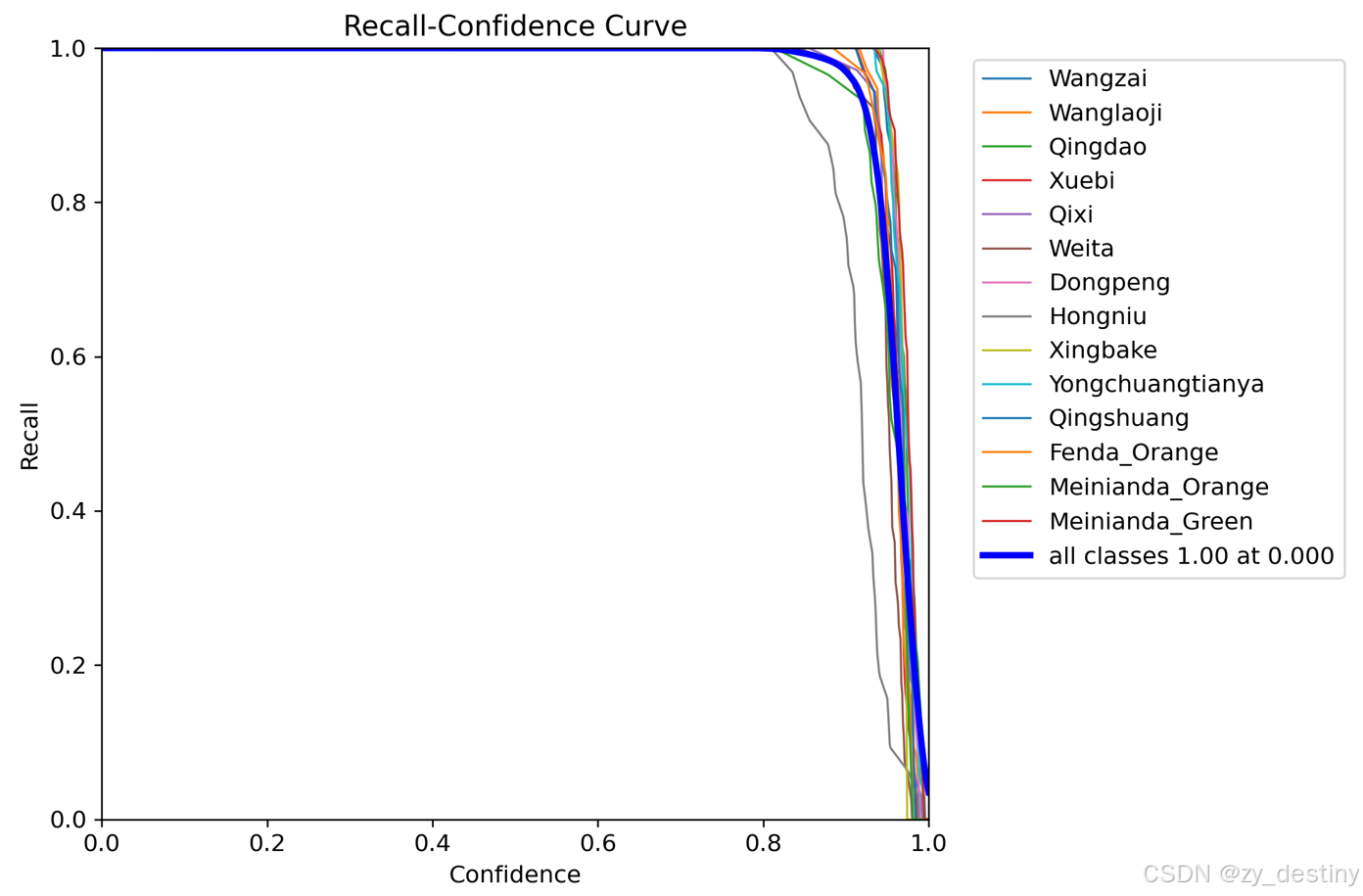

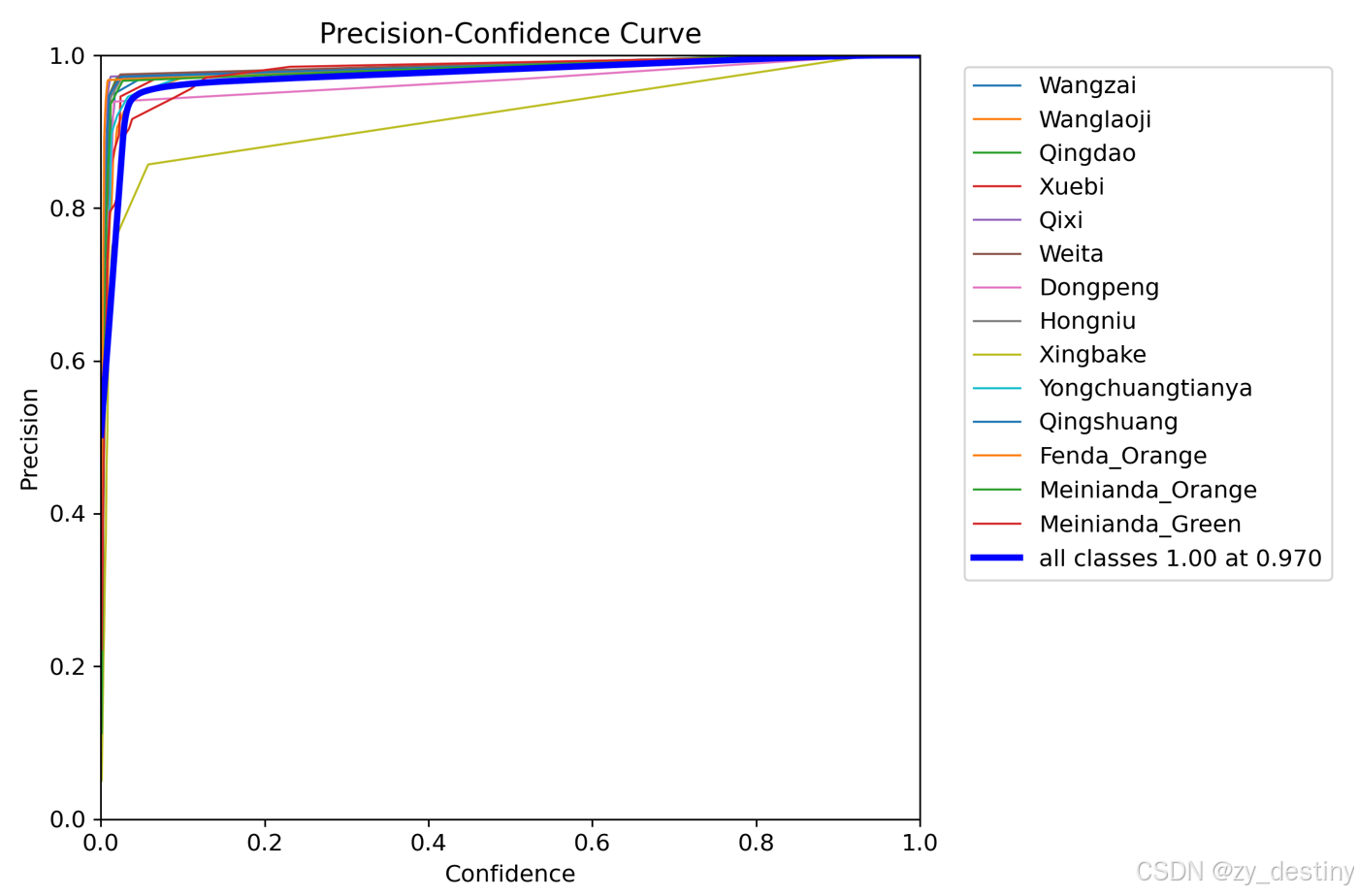

all 176 484 0.995 1 0.995 0.938

Wangzai 38 38 0.996 1 0.995 0.946

Wanglaoji 38 38 0.996 1 0.995 0.961

Qingdao 35 35 0.995 1 0.995 0.972

Xuebi 35 35 0.996 1 0.995 0.936

Qixi 35 35 0.998 1 0.995 0.915

Weita 38 39 0.998 1 0.995 0.904

Dongpeng 31 31 0.989 1 0.995 0.868

Hongniu 32 32 0.999 1 0.995 0.858

Xingbake 6 6 0.976 1 0.995 0.965

Yongchuangtianya 35 35 0.995 1 0.995 0.945

Qingshuang 35 35 0.996 1 0.995 0.966

Fenda_Orange 30 30 0.996 1 0.995 0.946

Meinianda_Orange 29 29 0.999 1 0.995 0.973

Meinianda_Green 65 66 0.997 1 0.995 0.98

Speed: 0.1ms preprocess, 0.9ms inference, 0.0ms loss, 0.7ms postprocess per image

Results saved to runs/detect/train5⭐4.2训练结果

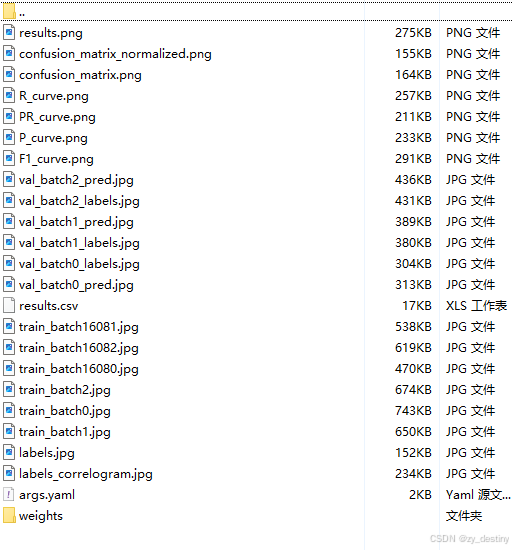

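The results after training are as follows:

The weights folder contains two models: best.pt and last.pt.

At this point, best.pt can be used for inference to recognize beverage categories.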

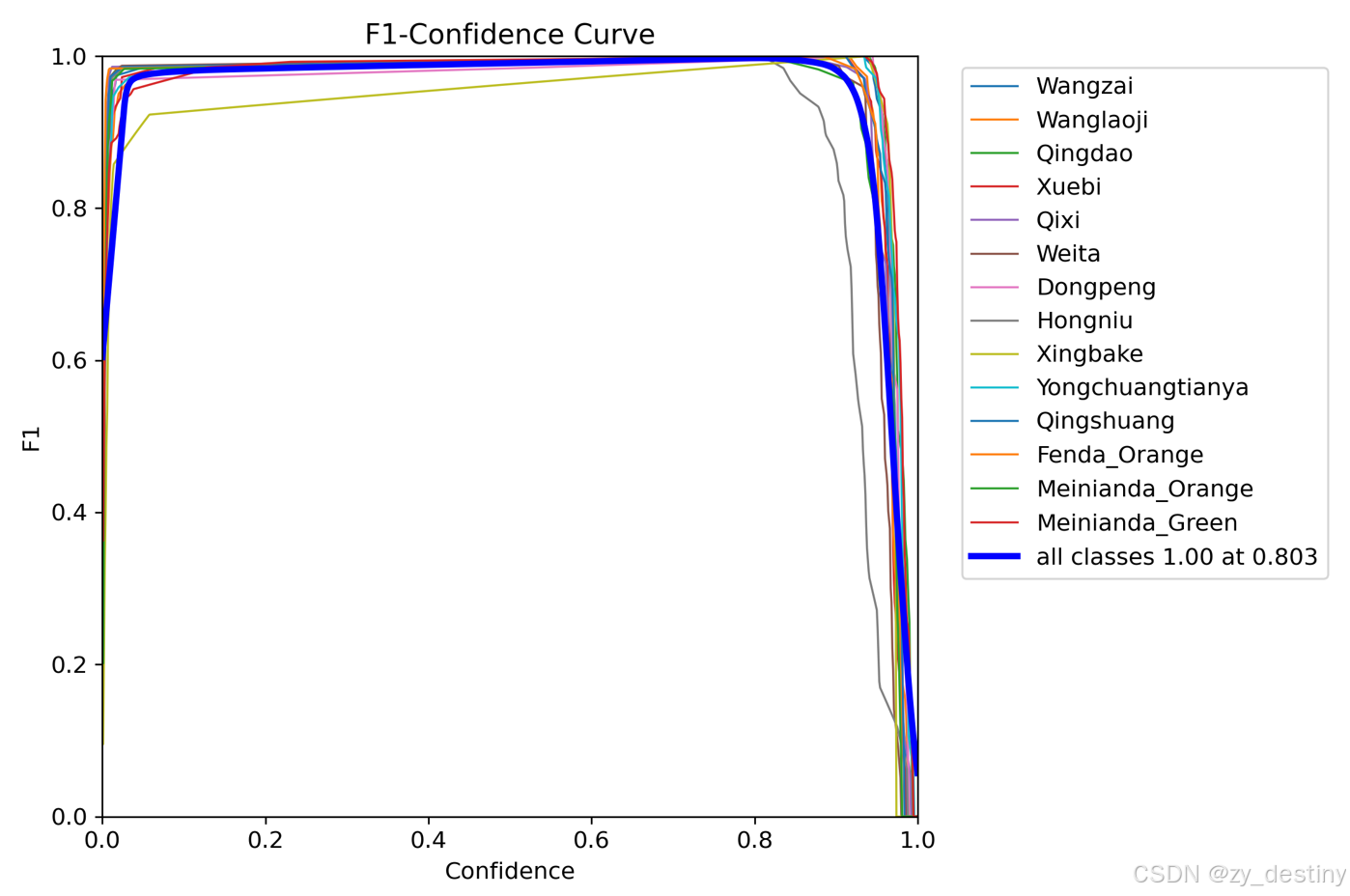

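Training accuracy:

Nearly every class reaches 99% or higher: in the validation log above, mAP50 is 0.995 and recall is 1 for every listed class, and precision is above 0.99 for all classes except Dongpeng (0.989) and Xingbake (0.976). The training results are quite good.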
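To check these numbers without reading the table by eye, the per-class rows of the validation log can be parsed. This is a small sketch, assuming each row has the seven whitespace-separated fields printed in the log (class, images, instances, P, R, mAP50, mAP50-95); `parse_val_rows` is a hypothetical helper, and the three sample rows are copied from the log above.

```python
def parse_val_rows(log_text):
    """Parse per-class validation rows into a dict keyed by class name."""
    rows = {}
    for line in log_text.splitlines():
        parts = line.split()
        if len(parts) != 7 or parts[0] == "all":
            continue  # skip headers, progress bars, and the summary row
        try:
            images, instances = int(parts[1]), int(parts[2])
            precision, recall, map50, map50_95 = (float(v) for v in parts[3:])
        except ValueError:
            continue  # not a metrics row
        rows[parts[0]] = {
            "images": images,
            "instances": instances,
            "precision": precision,
            "recall": recall,
            "map50": map50,
            "map50_95": map50_95,
        }
    return rows


# Three rows copied from the validation log in section 4.1
SAMPLE_LOG = """
Dongpeng 31 31 0.989 1 0.995 0.868
Hongniu 32 32 0.999 1 0.995 0.858
Meinianda_Green 65 66 0.997 1 0.995 0.98
"""
rows = parse_val_rows(SAMPLE_LOG)
# Class with the lowest mAP50-95 among the parsed rows
weakest = min(rows, key=lambda name: rows[name]["map50_95"])
print(weakest, rows[weakest]["map50_95"])
```

Sorting by mAP50-95 rather than mAP50 is useful here because mAP50 is identical across classes, so the stricter metric is what separates them.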

🏆🏆5. Object detection inference code

The Python code for batch inference is as follows:

    from ultralytics import YOLO
    from PIL import Image
    import os

    # Load the trained model; adjust the path to your own run directory
    model = YOLO('/yolov12/runs/detect/train4/weights/best.pt')
    path = '/home/dataset/yinliao/images/test/'  # directory of test images
    img_list = os.listdir(path)

    for img_path in img_list:
        # Run detection on each image and save the annotated image and txt results
        im1 = Image.open(os.path.join(path, img_path))
        results = model.predict(source=im1, save=True, save_txt=True)

If you need the full dataset and source code, feel free to send me a private message.
