1. Downloads:

Seata download: click here

Nacos download: click here

RuoYi (microservice edition) download: click here

Git Bash download: click here

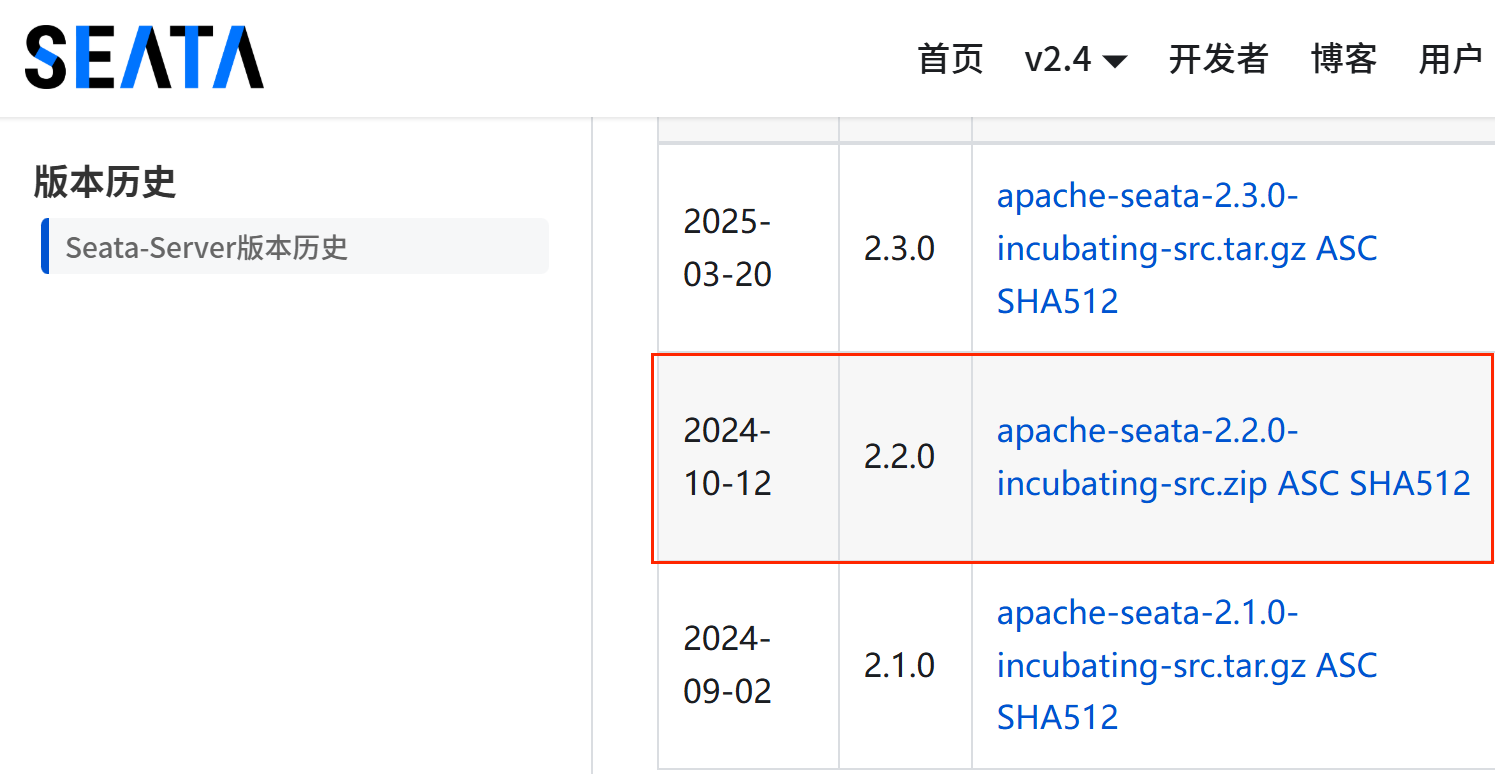

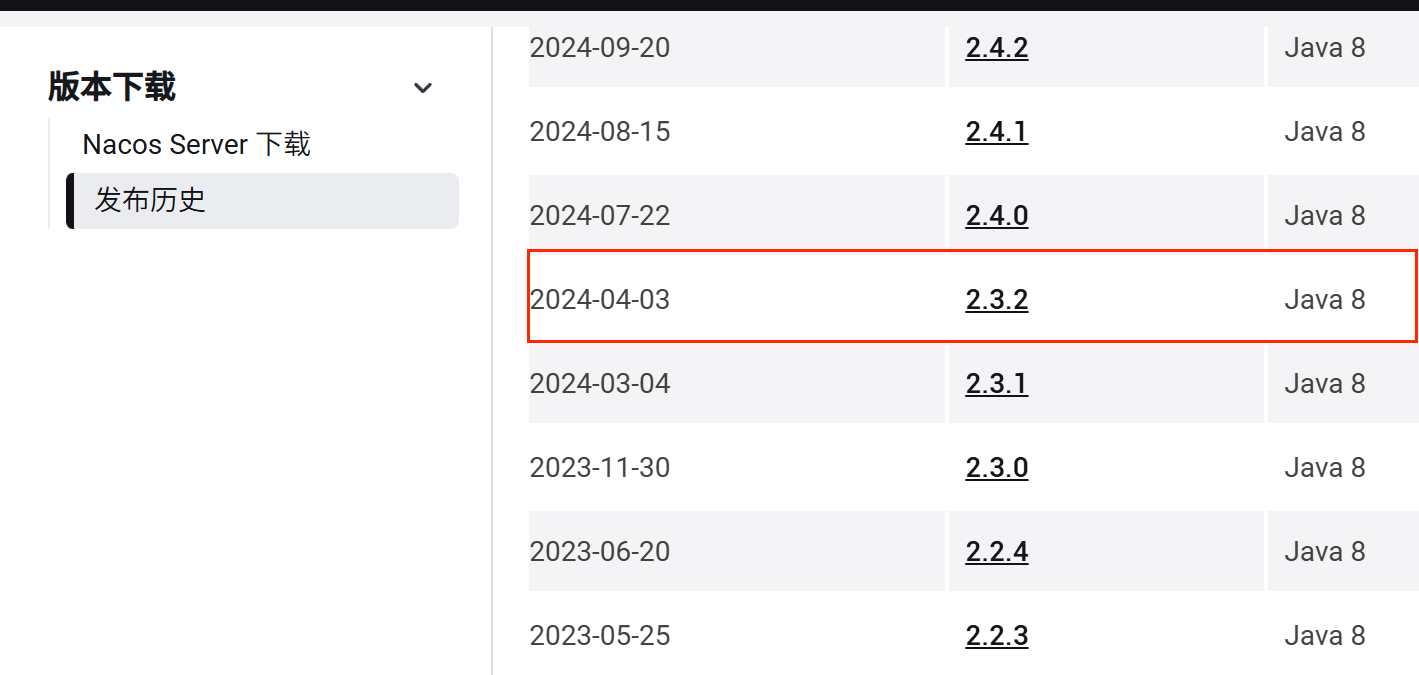

Versions used in this article:

seata-2.2.0 (red box in the screenshot below):

nacos-2.3.2 (red box in the screenshot below):

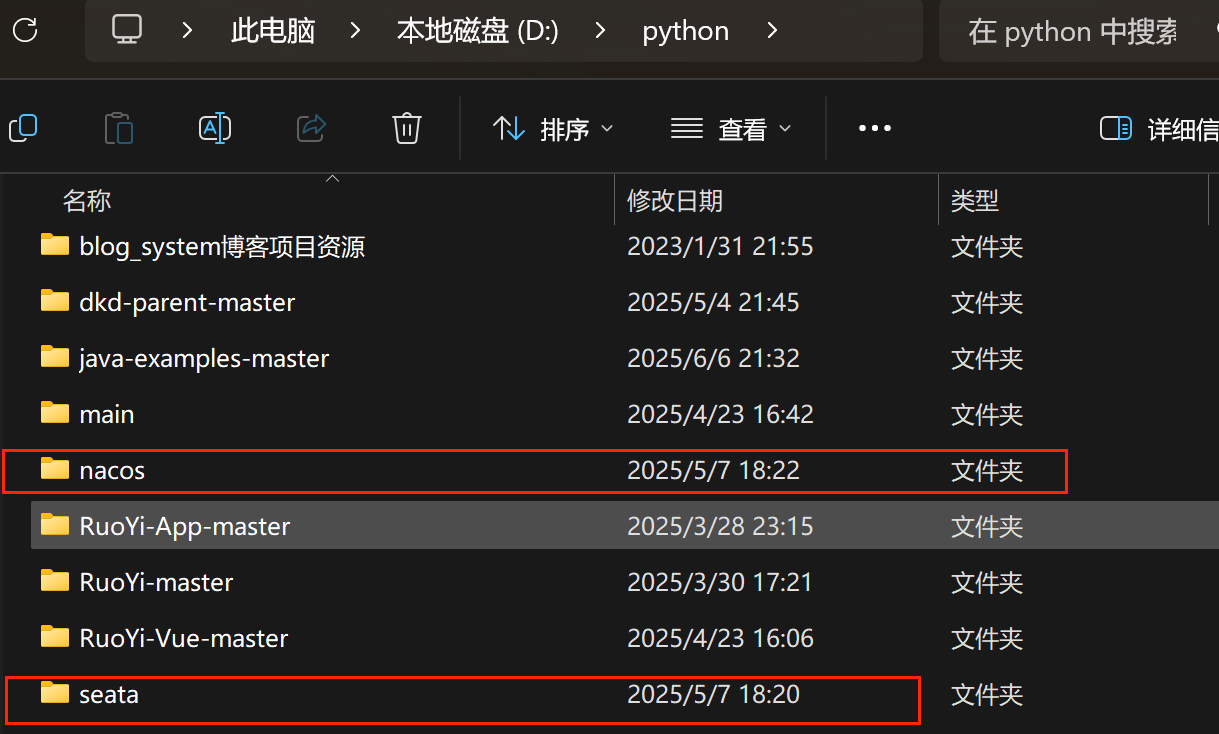

2. Unpacking:

Unpack the Nacos, Seata, and RuoYi archives and place them in a directory of your choice:

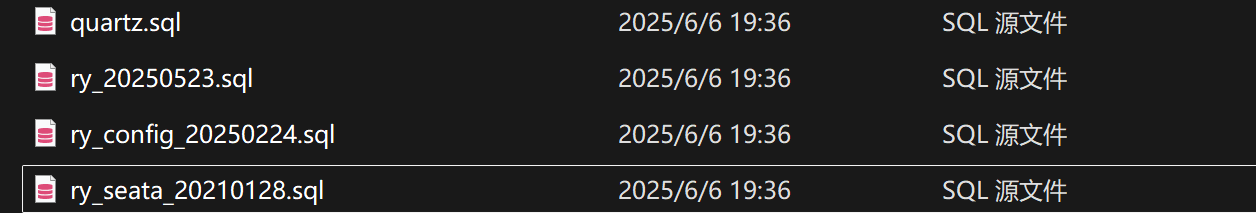

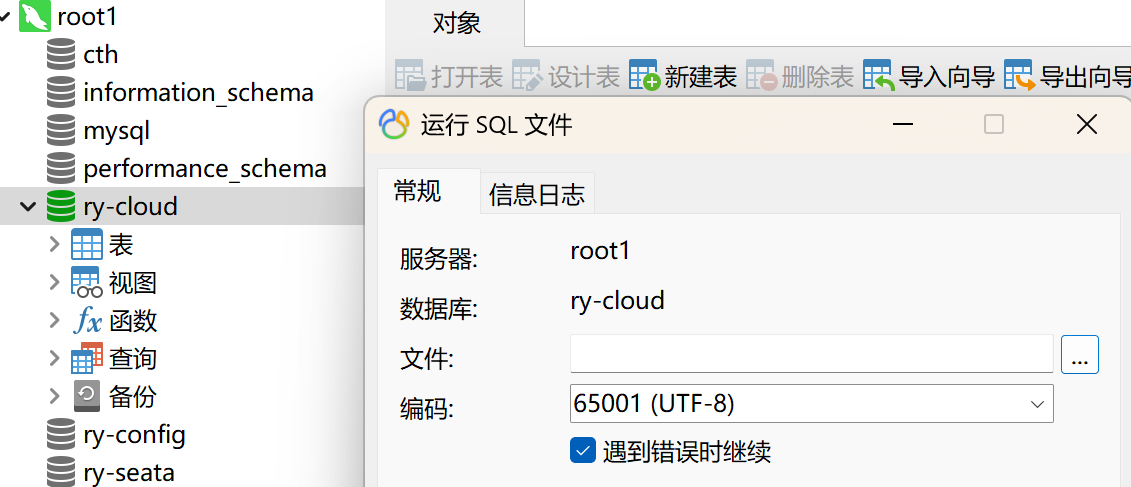

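Go to the sql folder under the RuoYi-Cloud-Master directory and import the data with a MySQL client (Navicat is used here). First create a database named ry-cloud, then import quartz.sql and ry_20250523.sql from that folder into it.

After that, right-click at the connection root and run ry_config_20250224.sql and ry_seata_20210128.sql from the same folder. The configuration later in this article expects their data in the ry-config and ry-seata databases.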
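For readers who prefer a terminal to Navicat, the same import order can be sketched with the mysql command-line client. This is only a dry-run helper under stated assumptions: the account is root, and `SQL_DIR`, `DB_USER`, and `import_cmd` are names introduced here for illustration. It prints the commands so they can be reviewed and then run by hand.

```shell
#!/bin/sh
# Dry-run sketch: prints mysql CLI commands that mirror the Navicat steps.
# SQL_DIR, DB_USER and import_cmd are illustrative names, not part of RuoYi.
SQL_DIR="RuoYi-Cloud-Master/sql"
DB_USER="root"

# import_cmd <database-or-empty> <file>: print one import command.
import_cmd() {
    if [ -n "$1" ]; then
        printf 'mysql -u %s -p "%s" < "%s/%s"\n' "$DB_USER" "$1" "$SQL_DIR" "$2"
    else
        # No database selected: the script runs at the connection root.
        printf 'mysql -u %s -p < "%s/%s"\n' "$DB_USER" "$SQL_DIR" "$2"
    fi
}

# Step 1: create ry-cloud, then load the two scripts into it.
printf 'mysql -u %s -p -e "CREATE DATABASE IF NOT EXISTS \\`ry-cloud\\`"\n' "$DB_USER"
import_cmd ry-cloud quartz.sql
import_cmd ry-cloud ry_20250523.sql

# Step 2: run the remaining two scripts without selecting a database.
import_cmd "" ry_config_20250224.sql
import_cmd "" ry_seata_20210128.sql
```

Each printed line can be pasted into Git Bash once the paths and user match your machine.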

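Go to the conf folder under the nacos directory, open application.properties, and adjust it as needed, using application.properties.example in the same folder as a reference. Only a basic configuration is shown here: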

#

# Copyright 1999-2021 Alibaba Group Holding Ltd.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

#*************** Spring Boot Related Configurations ***************#

### Default web context path:

server.servlet.contextPath=/nacos

### Include message field

server.error.include-message=ALWAYS

### Default web server port:

server.port=8848

#*************** Network Related Configurations ***************#

### If prefer hostname over ip for Nacos server addresses in cluster.conf:

# nacos.inetutils.prefer-hostname-over-ip=false

### Specify local server's IP:

# nacos.inetutils.ip-address=

#*************** Config Module Related Configurations ***************#

### If use MySQL as datasource:

### Deprecated configuration property, it is recommended to use `spring.sql.init.platform` replaced.

# spring.datasource.platform=mysql

# spring.sql.init.platform=mysql

### Count of DB:

# db.num=1

### Connect URL of DB:

# db.url.0=jdbc:mysql://127.0.0.1:3306/ry-config?characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useUnicode=true&useSSL=false&serverTimezone=UTC

# db.user.0=nacos

# db.password.0=nacos

### Connection pool configuration: hikariCP

db.pool.config.connectionTimeout=30000

db.pool.config.validationTimeout=10000

db.pool.config.maximumPoolSize=20

db.pool.config.minimumIdle=2

### the maximum retry times for push

nacos.config.push.maxRetryTime=50

#*************** Naming Module Related Configurations ***************#

### If enable data warmup. If set to false, the server would accept request without local data preparation:

# nacos.naming.data.warmup=true

### If enable the instance auto expiration, kind like of health check of instance:

# nacos.naming.expireInstance=true

### Add in 2.0.0

### The interval to clean empty service, unit: milliseconds.

# nacos.naming.clean.empty-service.interval=60000

### The expired time to clean empty service, unit: milliseconds.

# nacos.naming.clean.empty-service.expired-time=60000

### The interval to clean expired metadata, unit: milliseconds.

# nacos.naming.clean.expired-metadata.interval=5000

### The expired time to clean metadata, unit: milliseconds.

# nacos.naming.clean.expired-metadata.expired-time=60000

### The delay time before push task to execute from service changed, unit: milliseconds.

# nacos.naming.push.pushTaskDelay=500

### The timeout for push task execute, unit: milliseconds.

# nacos.naming.push.pushTaskTimeout=5000

### The delay time for retrying failed push task, unit: milliseconds.

# nacos.naming.push.pushTaskRetryDelay=1000

### Since 2.0.3

### The expired time for inactive client, unit: milliseconds.

# nacos.naming.client.expired.time=180000

#*************** CMDB Module Related Configurations ***************#

### The interval to dump external CMDB in seconds:

# nacos.cmdb.dumpTaskInterval=3600

### The interval of polling data change event in seconds:

# nacos.cmdb.eventTaskInterval=10

### The interval of loading labels in seconds:

# nacos.cmdb.labelTaskInterval=300

### If turn on data loading task:

# nacos.cmdb.loadDataAtStart=false

#***********Metrics for tomcat **************************#

server.tomcat.mbeanregistry.enabled=true

#***********Expose prometheus and health **************************#

#management.endpoints.web.exposure.include=prometheus,health

### Metrics for elastic search

management.metrics.export.elastic.enabled=false

#management.metrics.export.elastic.host=http://localhost:9200

### Metrics for influx

management.metrics.export.influx.enabled=false

#management.metrics.export.influx.db=springboot

#management.metrics.export.influx.uri=http://localhost:8086

#management.metrics.export.influx.auto-create-db=true

#management.metrics.export.influx.consistency=one

#management.metrics.export.influx.compressed=true

#*************** Access Log Related Configurations ***************#

### If turn on the access log:

server.tomcat.accesslog.enabled=true

### file name pattern, one file per hour

server.tomcat.accesslog.rotate=true

server.tomcat.accesslog.file-date-format=.yyyy-MM-dd-HH

### The access log pattern:

server.tomcat.accesslog.pattern=%h %l %u %t "%r" %s %b %D %{User-Agent}i %{Request-Source}i

### The directory of access log:

server.tomcat.basedir=file:.

#*************** Access Control Related Configurations ***************#

### If enable spring security, this option is deprecated in 1.2.0:

#spring.security.enabled=false

### The ignore urls of auth

nacos.security.ignore.urls=/,/error,/**/*.css,/**/*.js,/**/*.html,/**/*.map,/**/*.svg,/**/*.png,/**/*.ico,/console-ui/public/**,/v1/auth/**,/v1/console/health/**,/actuator/**,/v1/console/server/**

### The auth system to use, currently only 'nacos' and 'ldap' is supported:

nacos.core.auth.system.type=nacos

### If turn on auth system:

nacos.core.auth.enabled=true

### Turn on/off caching of auth information. By turning on this switch, the update of auth information would have a 15 seconds delay.

nacos.core.auth.caching.enabled=true

### Since 1.4.1, Turn on/off white auth for user-agent: nacos-server, only for upgrade from old version.

nacos.core.auth.enable.userAgentAuthWhite=false

### Since 1.4.1, worked when nacos.core.auth.enabled=true and nacos.core.auth.enable.userAgentAuthWhite=false.

### The two properties is the white list for auth and used by identity the request from other server.

nacos.core.auth.server.identity.key=nacos-admin

nacos.core.auth.server.identity.value=nacos

### worked when nacos.core.auth.system.type=nacos

### The token expiration in seconds:

nacos.core.auth.plugin.nacos.token.cache.enable=false

nacos.core.auth.plugin.nacos.token.expire.seconds=18000

### The default token (Base64 String):

nacos.core.auth.plugin.nacos.token.secret.key=bmFjb3NjdGgxMjM0NTY3ODkxMDM1NDIxMTc3ODNjdGhjdGg=

### worked when nacos.core.auth.system.type=ldap,{0} is Placeholder,replace login username

#nacos.core.auth.ldap.url=ldap://localhost:389

#nacos.core.auth.ldap.basedc=dc=example,dc=org

#nacos.core.auth.ldap.userDn=cn=admin,${nacos.core.auth.ldap.basedc}

#nacos.core.auth.ldap.password=admin

#nacos.core.auth.ldap.userdn=cn={0},dc=example,dc=org

#nacos.core.auth.ldap.filter.prefix=uid

#nacos.core.auth.ldap.case.sensitive=true

#nacos.core.auth.ldap.ignore.partial.result.exception=false

#*************** Control Plugin Related Configurations ***************#

# plugin type

#nacos.plugin.control.manager.type=nacos

# local control rule storage dir, default ${nacos.home}/data/connection and ${nacos.home}/data/tps

#nacos.plugin.control.rule.local.basedir=${nacos.home}

# external control rule storage type, if exist

#nacos.plugin.control.rule.external.storage=

#*************** Config Change Plugin Related Configurations ***************#

# webhook

#nacos.core.config.plugin.webhook.enabled=false

# It is recommended to use EB https://help.aliyun.com/document_detail/413974.html

#nacos.core.config.plugin.webhook.url=http://localhost:8080/webhook/send?token=***

# The content push max capacity ,byte

#nacos.core.config.plugin.webhook.contentMaxCapacity=102400

# whitelist

#nacos.core.config.plugin.whitelist.enabled=false

# The import file suffixs

#nacos.core.config.plugin.whitelist.suffixs=xml,text,properties,yaml,html

# fileformatcheck,which validate the import file of type and content

#nacos.core.config.plugin.fileformatcheck.enabled=false

#*************** Istio Related Configurations ***************#

### If turn on the MCP server:

nacos.istio.mcp.server.enabled=false

#*************** Core Related Configurations ***************#

### set the WorkerID manually

# nacos.core.snowflake.worker-id=

### Member-MetaData

# nacos.core.member.meta.site=

# nacos.core.member.meta.adweight=

# nacos.core.member.meta.weight=

### MemberLookup

### Addressing pattern category, If set, the priority is highest

# nacos.core.member.lookup.type=[file,address-server]

## Set the cluster list with a configuration file or command-line argument

# nacos.member.list=192.168.16.101:8847?raft_port=8807,192.168.16.101?raft_port=8808,192.168.16.101:8849?raft_port=8809

## for AddressServerMemberLookup

# Maximum number of retries to query the address server upon initialization

# nacos.core.address-server.retry=5

## Server domain name address of [address-server] mode

# address.server.domain=jmenv.tbsite.net

## Server port of [address-server] mode

# address.server.port=8080

## Request address of [address-server] mode

# address.server.url=/nacos/serverlist

#*************** JRaft Related Configurations ***************#

### Sets the Raft cluster election timeout, default value is 5 second

# nacos.core.protocol.raft.data.election_timeout_ms=5000

### Sets the amount of time the Raft snapshot will execute periodically, default is 30 minute

# nacos.core.protocol.raft.data.snapshot_interval_secs=30

### raft internal worker threads

# nacos.core.protocol.raft.data.core_thread_num=8

### Number of threads required for raft business request processing

# nacos.core.protocol.raft.data.cli_service_thread_num=4

### raft linear read strategy. Safe linear reads are used by default, that is, the Leader tenure is confirmed by heartbeat

# nacos.core.protocol.raft.data.read_index_type=ReadOnlySafe

### rpc request timeout, default 5 seconds

# nacos.core.protocol.raft.data.rpc_request_timeout_ms=5000

#*************** Distro Related Configurations ***************#

### Distro data sync delay time, when sync task delayed, task will be merged for same data key. Default 1 second.

# nacos.core.protocol.distro.data.sync.delayMs=1000

### Distro data sync timeout for one sync data, default 3 seconds.

# nacos.core.protocol.distro.data.sync.timeoutMs=3000

### Distro data sync retry delay time when sync data failed or timeout, same behavior with delayMs, default 3 seconds.

# nacos.core.protocol.distro.data.sync.retryDelayMs=3000

### Distro data verify interval time, verify synced data whether expired for a interval. Default 5 seconds.

# nacos.core.protocol.distro.data.verify.intervalMs=5000

### Distro data verify timeout for one verify, default 3 seconds.

# nacos.core.protocol.distro.data.verify.timeoutMs=3000

### Distro data load retry delay when load snapshot data failed, default 30 seconds.

# nacos.core.protocol.distro.data.load.retryDelayMs=30000

### enable to support prometheus service discovery

#nacos.prometheus.metrics.enabled=true

### Since 2.3

#*************** Grpc Configurations ***************#

## sdk grpc(between nacos server and client) configuration

## Sets the maximum message size allowed to be received on the server.

#nacos.remote.server.grpc.sdk.max-inbound-message-size=10485760

## Sets the time(milliseconds) without read activity before sending a keepalive ping. The typical default is two hours.

#nacos.remote.server.grpc.sdk.keep-alive-time=7200000

## Sets a time(milliseconds) waiting for read activity after sending a keepalive ping. Defaults to 20 seconds.

#nacos.remote.server.grpc.sdk.keep-alive-timeout=20000

## Sets a time(milliseconds) that specify the most aggressive keep-alive time clients are permitted to configure. The typical default is 5 minutes

#nacos.remote.server.grpc.sdk.permit-keep-alive-time=300000

## cluster grpc(inside the nacos server) configuration

#nacos.remote.server.grpc.cluster.max-inbound-message-size=10485760

## Sets the time(milliseconds) without read activity before sending a keepalive ping. The typical default is two hours.

#nacos.remote.server.grpc.cluster.keep-alive-time=7200000

## Sets a time(milliseconds) waiting for read activity after sending a keepalive ping. Defaults to 20 seconds.

#nacos.remote.server.grpc.cluster.keep-alive-timeout=20000

## Sets a time(milliseconds) that specify the most aggressive keep-alive time clients are permitted to configure. The typical default is 5 minutes

#nacos.remote.server.grpc.cluster.permit-keep-alive-time=300000

## open nacos default console ui

#nacos.console.ui.enabled=true

spring.datasource.platform=mysql

db.num=1

db.url.0=jdbc:mysql://localhost:3306/ry-config?characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true

db.user=root

db.password=123456

Change the MySQL user, password, and database name (RuoYi's ry-config is used here) to match your own environment.
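Because the file is long and most datasource lines in it are commented out, it can help to list only the active ones after editing. The following is a minimal sketch; the function name `active_db_settings` is made up for this example, and the demo runs against a throwaway file so that it works without a Nacos installation.

```shell
#!/bin/sh
# active_db_settings <file>: print the uncommented datasource lines.
# The function name is illustrative; it is not a Nacos tool.
active_db_settings() {
    grep -E '^(spring\.datasource\.platform|spring\.sql\.init\.platform|db\.(num|url|user|password))' "$1"
}

# Demo on a temporary file that imitates a few lines of application.properties.
demo=$(mktemp)
cat > "$demo" <<'EOF'
# db.num=1
# db.user.0=nacos
db.pool.config.maximumPoolSize=20
spring.datasource.platform=mysql
db.num=1
db.user=root
EOF
active_db_settings "$demo"
rm -f "$demo"
```

From the nacos directory the real check would be `active_db_settings conf/application.properties`; commented lines and the connection pool settings are filtered out.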

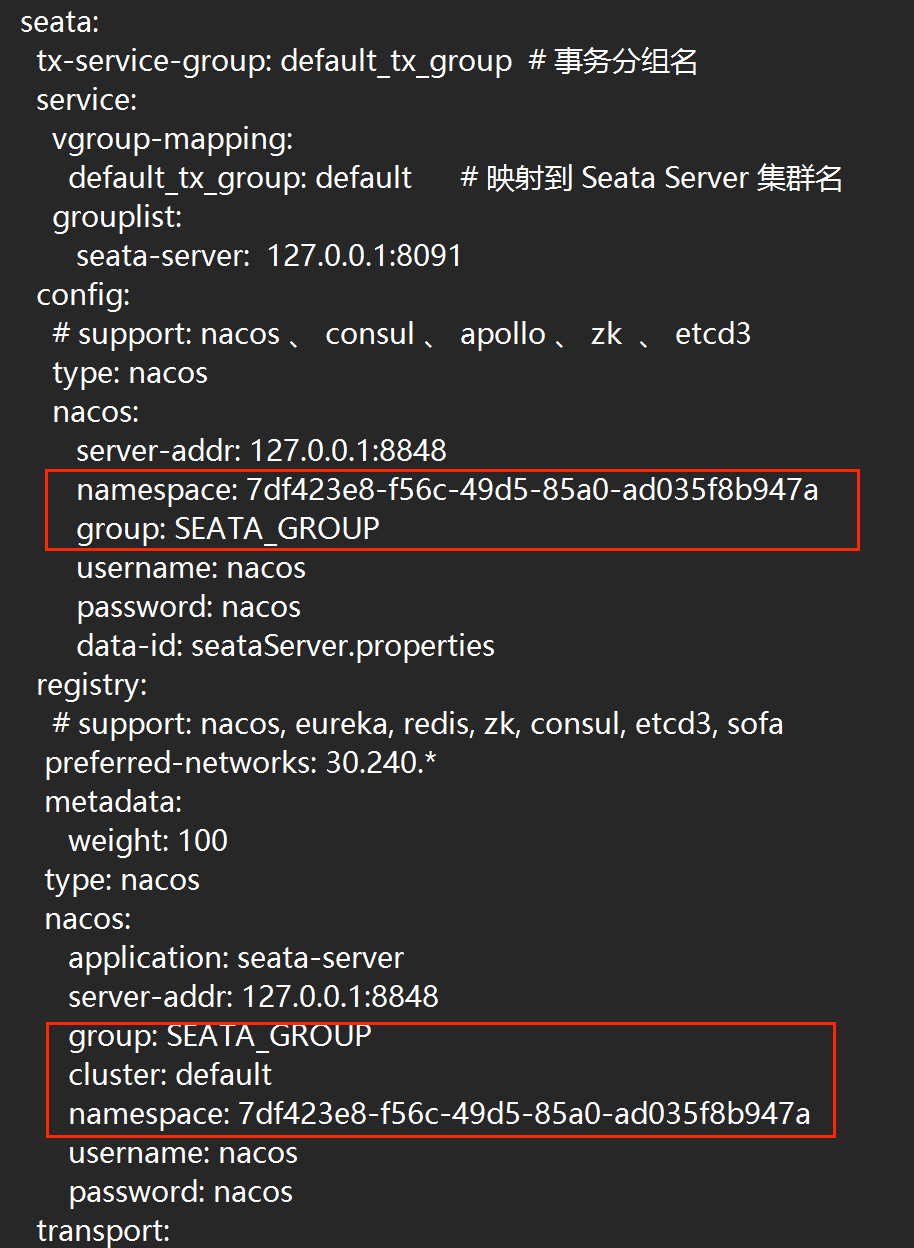

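Next, go to the conf folder under seata-server in the seata directory, open application.yml, and adjust it as needed, using application.example.yml in the same folder as a reference. Only a basic configuration is shown here: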

#

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

server:
  port: 7091

spring:
  application:
    name: seata-server

logging:
  config: classpath:logback-spring.xml
  file:
    path: ${log.home:${user.home}/logs/seata}
  extend:
    logstash-appender:
      destination: 127.0.0.1:4560
    kafka-appender:
      bootstrap-servers: 127.0.0.1:9092
      topic: logback_to_logstash

console:
  user:
    username: seata
    password: seata

seata:
  tx-service-group: default_tx_group # transaction group name
  service:
    vgroup-mapping:
      default_tx_group: default # maps to the Seata Server cluster name
    grouplist:
      seata-server: 127.0.0.1:8091
  config:
    # support: nacos 、 consul 、 apollo 、 zk 、 etcd3
    type: nacos
    nacos:
      server-addr: 127.0.0.1:8848
      namespace: 7df423e8-f56c-49d5-85a0-ad035f8b947a
      group: SEATA_GROUP
      username: nacos
      password: nacos
      data-id: seataServer.properties
  registry:
    # support: nacos, eureka, redis, zk, consul, etcd3, sofa
    preferred-networks: 30.240.*
    metadata:
      weight: 100
    type: nacos
    nacos:
      application: seata-server
      server-addr: 127.0.0.1:8848
      group: SEATA_GROUP
      cluster: default
      namespace: 7df423e8-f56c-49d5-85a0-ad035f8b947a
      username: nacos
      password: nacos
  transport:
    type: TCP
    server: 127.0.0.1:8091
  store:
    # support: file 、 db 、 redis 、 raft
    mode: db
    session:
      mode: db
    lock:
      mode: db
    db:
      datasource: druid
      db-type: mysql
      driver-class-name: com.mysql.cj.jdbc.Driver
      url: jdbc:mysql://127.0.0.1:3306/ry-seata?rewriteBatchedStatements=true&useSSL=false
      user: root
      password: 123456
      min-conn: 10
      max-conn: 100
      global-table: global_table
      branch-table: branch_table
      lock-table: lock_table
      distributed-lock-table: distributed_lock
      vgroup-table: vgroup_table
      query-limit: 1000
      max-wait: 5000
  # server:
  #   service-port: 8091 #If not configured, the default is '${server.port} + 1000'
  security:
    secretKey: SeataSecretKey0c382ef121d778043159209298fd40bf3850a017
    tokenValidityInMilliseconds: 1800000
    csrf-ignore-urls: /metadata/v1/**
    ignore:
      urls: /,/**/*.css,/**/*.js,/**/*.html,/**/*.map,/**/*.svg,/**/*.png,/**/*.jpeg,/**/*.ico,/api/v1/auth/login,/version.json,/health,/error,/vgroup/v1/**

Change the MySQL user, password, and database name (RuoYi's ry-seata is used here) to match your own environment. The namespace value must be the ID of the namespace you create in Nacos later in this article.
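The commented-out service-port line in this file explains where the 8091 in the addresses above comes from: when the service port is not set, it defaults to the console port (server.port) plus 1000. A tiny sketch of that arithmetic, with a function name invented for illustration:

```shell
#!/bin/sh
# default_service_port <server.port>: the transaction service port that the
# config comment describes as '${server.port} + 1000'.
# The function name is illustrative only.
default_service_port() {
    echo $(( $1 + 1000 ))
}

default_service_port 7091   # prints 8091
```

So if server.port is changed, any 8091 address in the client-side settings needs to change with it unless service-port is set explicitly.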

3. Configuration for registering Seata with Nacos:

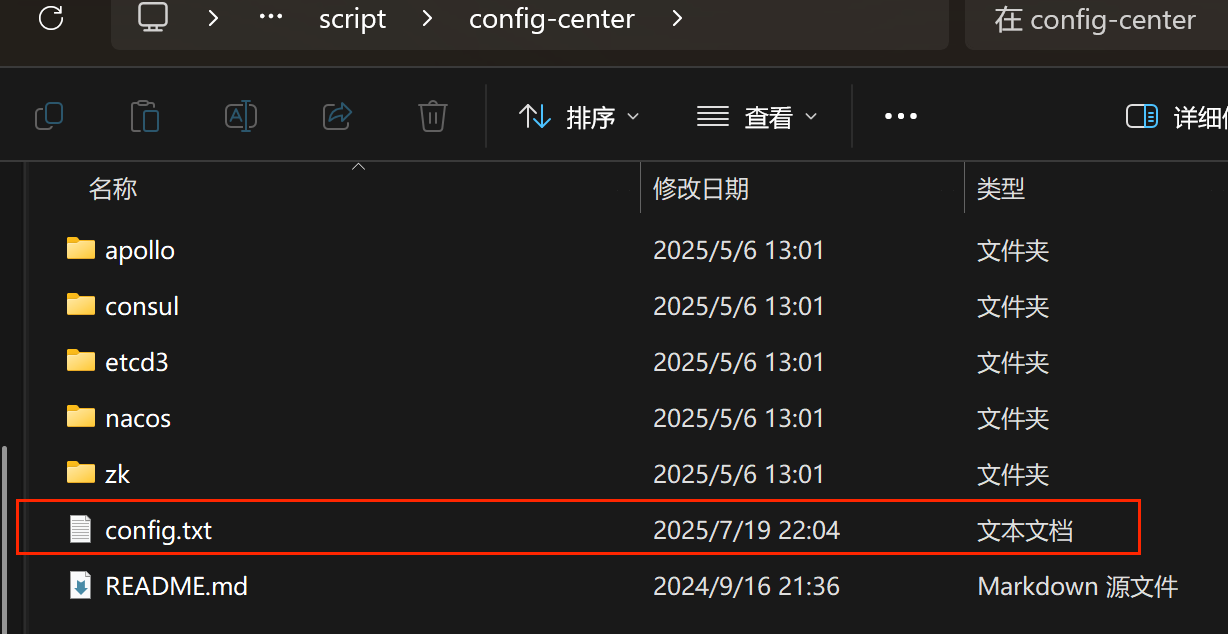

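Go to the seata-server\script\config-center folder under the seata directory. It contains a config.txt file (create one if it is missing).

Put the following content into config.txt (check the MySQL username and password and the database name ry-seata against your own setup):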

#

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

#For details about configuration items, see https://seata.apache.org/zh-cn/docs/user/configurations

#Transport configuration, for client and server

transport.type=TCP

transport.server=NIO

transport.heartbeat=true

transport.enableTmClientBatchSendRequest=false

transport.enableRmClientBatchSendRequest=true

transport.enableTcServerBatchSendResponse=false

transport.rpcRmRequestTimeout=30000

transport.rpcTmRequestTimeout=30000

transport.rpcTcRequestTimeout=30000

transport.threadFactory.bossThreadPrefix=NettyBoss

transport.threadFactory.workerThreadPrefix=NettyServerNIOWorker

transport.threadFactory.serverExecutorThreadPrefix=NettyServerBizHandler

transport.threadFactory.shareBossWorker=false

transport.threadFactory.clientSelectorThreadPrefix=NettyClientSelector

transport.threadFactory.clientSelectorThreadSize=1

transport.threadFactory.clientWorkerThreadPrefix=NettyClientWorkerThread

transport.threadFactory.bossThreadSize=1

transport.threadFactory.workerThreadSize=default

transport.shutdown.wait=3

transport.serialization=seata

transport.compressor=none

#Transaction routing rules configuration, only for the client

service.vgroupMapping.default_tx_group=default

#If you use a registry, you can ignore it

service.default.grouplist=127.0.0.1:8091

service.disableGlobalTransaction=false

client.metadataMaxAgeMs=30000

#Transaction rule configuration, only for the client

client.rm.asyncCommitBufferLimit=10000

client.rm.lock.retryInterval=10

client.rm.lock.retryTimes=30

client.rm.lock.retryPolicyBranchRollbackOnConflict=true

client.rm.reportRetryCount=5

client.rm.tableMetaCheckEnable=true

client.rm.tableMetaCheckerInterval=60000

client.rm.sqlParserType=druid

client.rm.reportSuccessEnable=false

client.rm.sagaBranchRegisterEnable=false

client.rm.sagaJsonParser=fastjson

client.rm.tccActionInterceptorOrder=-2147482648

client.rm.sqlParserType=druid

client.tm.commitRetryCount=5

client.tm.rollbackRetryCount=5

client.tm.defaultGlobalTransactionTimeout=60000

client.tm.degradeCheck=false

client.tm.degradeCheckAllowTimes=10

client.tm.degradeCheckPeriod=2000

client.tm.interceptorOrder=-2147482648

client.undo.dataValidation=true

client.undo.logSerialization=jackson

client.undo.onlyCareUpdateColumns=true

server.undo.logSaveDays=7

server.undo.logDeletePeriod=86400000

client.undo.logTable=undo_log

client.undo.compress.enable=true

client.undo.compress.type=zip

client.undo.compress.threshold=64k

#For TCC transaction mode

tcc.fence.logTableName=tcc_fence_log

tcc.fence.cleanPeriod=1h

# You can choose from the following options: fastjson, jackson, gson

tcc.contextJsonParserType=fastjson

#Log rule configuration, for client and server

log.exceptionRate=100

#Transaction storage configuration, only for the server. The file, db, and redis configuration values are optional.

store.mode=db

store.lock.mode=db

store.session.mode=db

#Used for password encryption

store.publicKey=

#If `store.mode,store.lock.mode,store.session.mode` are not equal to `file`, you can remove the configuration block.

store.file.dir=file_store/data

store.file.maxBranchSessionSize=16384

store.file.maxGlobalSessionSize=512

store.file.fileWriteBufferCacheSize=16384

store.file.flushDiskMode=async

store.file.sessionReloadReadSize=100

#These configurations are required if the `store mode` is `db`. If `store.mode,store.lock.mode,store.session.mode` are not equal to `db`, you can remove the configuration block.

store.db.datasource=druid

store.db.dbType=mysql

store.db.driverClassName=com.mysql.cj.jdbc.Driver

store.db.url=jdbc:mysql://127.0.0.1:3306/ry-seata?useUnicode=true&rewriteBatchedStatements=true&useSSL=false

store.db.user=root

store.db.password=123456

store.db.minConn=5

store.db.maxConn=30

store.db.globalTable=global_table

store.db.branchTable=branch_table

store.db.distributedLockTable=distributed_lock

store.db.vgroupTable=vgroup_table

store.db.queryLimit=100

store.db.lockTable=lock_table

store.db.maxWait=5000

#These configurations are required if the `store mode` is `redis`. If `store.mode,store.lock.mode,store.session.mode` are not equal to `redis`, you can remove the configuration block.

store.redis.mode=single

store.redis.type=pipeline

store.redis.single.host=127.0.0.1

store.redis.single.port=6379

store.redis.sentinel.masterName=

store.redis.sentinel.sentinelHosts=

store.redis.sentinel.sentinelPassword=

store.redis.maxConn=10

store.redis.minConn=1

store.redis.maxTotal=100

store.redis.database=0

store.redis.password=

store.redis.queryLimit=100

#Transaction rule configuration, only for the server

server.recovery.committingRetryPeriod=1000

server.recovery.asynCommittingRetryPeriod=1000

server.recovery.rollbackingRetryPeriod=1000

server.recovery.timeoutRetryPeriod=1000

server.maxCommitRetryTimeout=-1

server.maxRollbackRetryTimeout=-1

server.rollbackRetryTimeoutUnlockEnable=false

server.distributedLockExpireTime=10000

server.session.branchAsyncQueueSize=5000

server.session.enableBranchAsyncRemove=false

server.enableParallelRequestHandle=true

server.enableParallelHandleBranch=false

server.applicationDataLimit=64000

server.applicationDataLimitCheck=false

server.raft.server-addr=127.0.0.1:7091,127.0.0.1:7092,127.0.0.1:7093

server.raft.snapshotInterval=600

server.raft.applyBatch=32

server.raft.maxAppendBufferSize=262144

server.raft.maxReplicatorInflightMsgs=256

server.raft.disruptorBufferSize=16384

server.raft.electionTimeoutMs=2000

server.raft.reporterEnabled=false

server.raft.reporterInitialDelay=60

server.raft.serialization=jackson

server.raft.compressor=none

server.raft.sync=true

#Metrics configuration, only for the server

metrics.enabled=true

metrics.registryType=compact

metrics.exporterList=prometheus

metrics.exporterPrometheusPort=9898

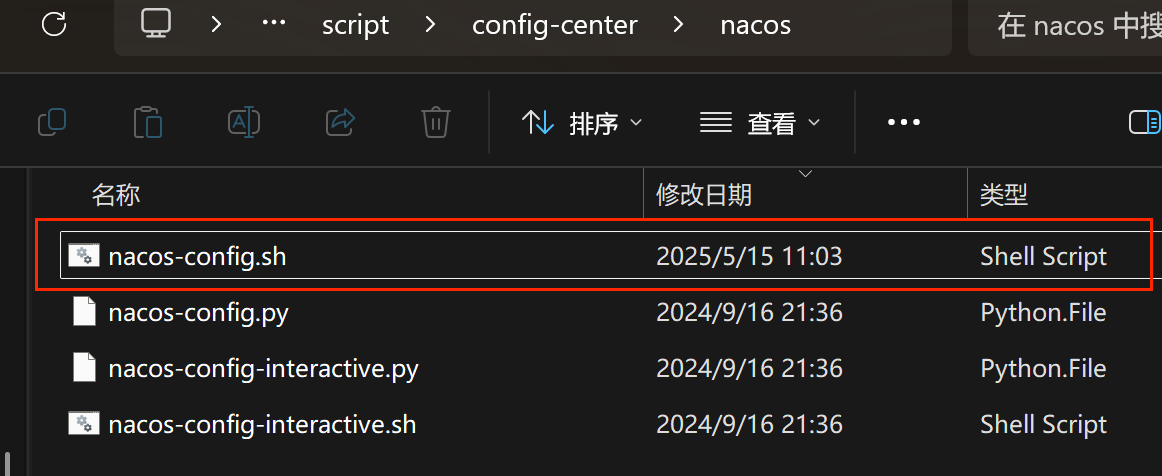
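The import script shown later in this article prints a total-count when it finishes. A quick way to know what number to expect is to count the lines of config.txt that are neither comments nor blank once whitespace is stripped, which is how the script treats the file. A minimal sketch, where `count_entries` is a name introduced here:

```shell
#!/bin/sh
# count_entries <file>: number of lines the import script would push,
# i.e. lines that are neither blank nor comments after whitespace is removed.
# The function name is illustrative only.
count_entries() {
    sed 's/[[:space:]]//g' "$1" | grep -v '^#' | grep -c .
}

# Demo on a temporary file with one comment, one blank line, three entries.
demo=$(mktemp)
printf '%s\n' '#Transport configuration' 'transport.type=TCP' '' \
    'store.mode=db' 'store.publicKey=' > "$demo"
count_entries "$demo"   # prints 3
rm -f "$demo"
```

Entries with an empty value, such as store.publicKey=, are still counted because the script sends them too.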

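After editing config.txt, go to the seata-server\script\config-center\nacos folder under the seata directory, where you will find nacos-config.sh (create it if it is missing):

If you created the file yourself, put the following content into nacos-config.sh: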

#!/bin/sh

#

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

while getopts ":h:p:g:t:u:w:" opt
do
  case $opt in
  h)
    host=$OPTARG
    ;;
  p)
    port=$OPTARG
    ;;
  g)
    group=$OPTARG
    ;;
  t)
    tenant=$OPTARG
    ;;
  u)
    username=$OPTARG
    ;;
  w)
    password=$OPTARG
    ;;
  ?)
    echo " USAGE OPTION: $0 [-h host] [-p port] [-g group] [-t tenant] [-u username] [-w password] "
    exit 1
    ;;
  esac
done

if [ -z ${host} ]; then
  host=localhost
fi
if [ -z ${port} ]; then
  port=8848
fi
if [ -z ${group} ]; then
  group="SEATA_GROUP"
fi
if [ -z ${tenant} ]; then
  tenant=""
fi
if [ -z ${username} ]; then
  username=""
fi
if [ -z ${password} ]; then
  password=""
fi

nacosAddr=$host:$port
contentType="content-type:application/json;charset=UTF-8"

echo "set nacosAddr=$nacosAddr"
echo "set group=$group"

urlencode() {
  length="${#1}"
  i=0
  while [ $length -gt $i ]; do
    char="${1:$i:1}"
    case $char in
    [a-zA-Z0-9.~_-]) printf $char ;;
    *) printf '%%%02X' "'$char" ;;
    esac
    i=`expr $i + 1`
  done
}

failCount=0
tempLog=$(mktemp -u)
function addConfig() {
  dataId=`urlencode $1`
  content=`urlencode $2`
  curl -X POST -H "${contentType}" "http://$nacosAddr/nacos/v1/cs/configs?dataId=$dataId&group=$group&content=$content&tenant=$tenant&username=$username&password=$password" >"${tempLog}" 2>/dev/null
  if [ -z $(cat "${tempLog}") ]; then
    echo " Please check the cluster status. "
    exit 1
  fi
  if [ "$(cat "${tempLog}")" == "true" ]; then
    echo "Set $1=$2 successfully "
  else
    echo "Set $1=$2 failure "
    failCount=`expr $failCount + 1`
  fi
}

count=0
COMMENT_START="#"
for line in $(cat $(dirname "$PWD")/config.txt | sed s/[[:space:]]//g); do
  if [[ "$line" =~ ^"${COMMENT_START}".* ]]; then
    continue
  fi
  count=`expr $count + 1`
  key=${line%%=*}
  value=${line#*=}
  addConfig "${key}" "${value}"
done

echo "========================================================================="
echo " Complete initialization parameters, total-count:$count , failure-count:$failCount "
echo "========================================================================="

if [ ${failCount} -eq 0 ]; then
  echo " Init nacos config finished, please start seata-server. "
else
  echo " init nacos config fail. "
fi

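In the script, $(dirname "$PWD")/config.txt is the path used to find config.txt: the file is expected in the parent of the directory the script is run from. If your config.txt is in the same location as in this article, nothing needs to change.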
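Two details of the script are easy to check in isolation: how the config.txt path is derived from the working directory, and how each line is split into a key and a value. The sketch below reuses the same shell expansions as the script; the helper names and the example directory are hypothetical.

```shell
#!/bin/sh
# 1) Path resolution: the script reads "$(dirname "$PWD")/config.txt",
#    that is, config.txt in the parent of the current working directory.
#    config_path_from is an illustrative helper taking the directory as $1.
config_path_from() {
    echo "$(dirname "$1")/config.txt"
}
# Hypothetical Git Bash working directory:
config_path_from "/d/seata/seata-server/script/config-center/nacos"

# 2) Line splitting: text before the first '=' is the key (used as the Nacos
#    dataId); everything after it is the value, even if it contains '='.
split_key()   { echo "${1%%=*}"; }
split_value() { echo "${1#*=}"; }

line='store.db.url=jdbc:mysql://127.0.0.1:3306/ry-seata?useUnicode=true&useSSL=false'
split_key "$line"
split_value "$line"
```

This is why the script has to be started from inside the nacos folder, and why JDBC URLs with query parameters survive the split intact.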

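4. Starting Nacos and Seata:

Start Nacos:

Go to the bin folder of Nacos, hold Shift and right-click, choose to open a terminal there, and run the following command, which starts Nacos in standalone mode:

startup.cmd -m standalone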

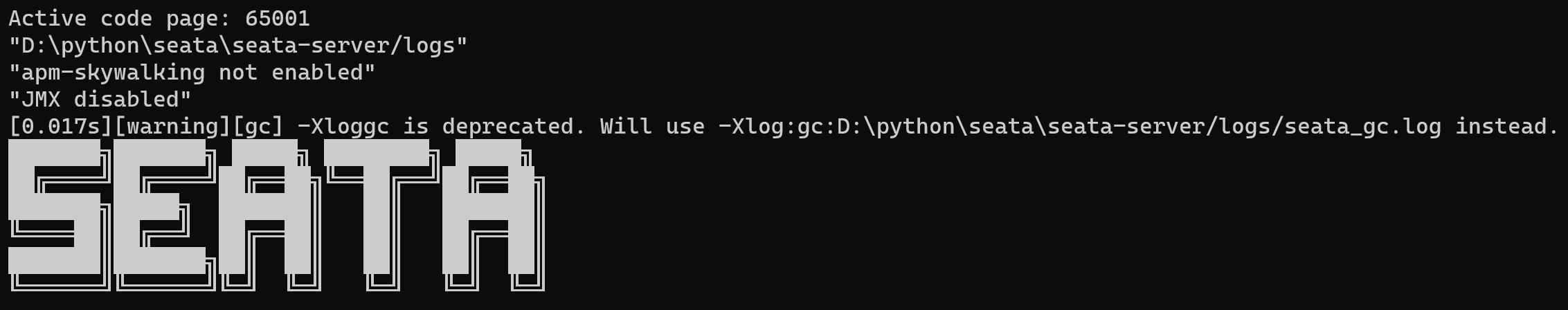

Start Seata:

Go to the bin directory under seata\seata-server and double-click seata-server.bat to start Seata:

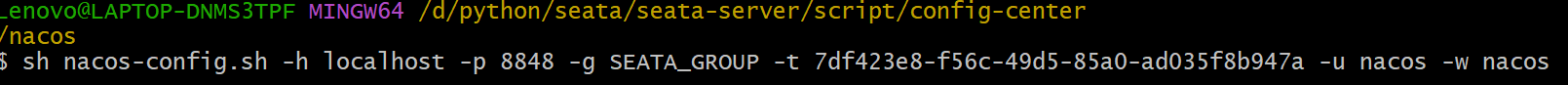

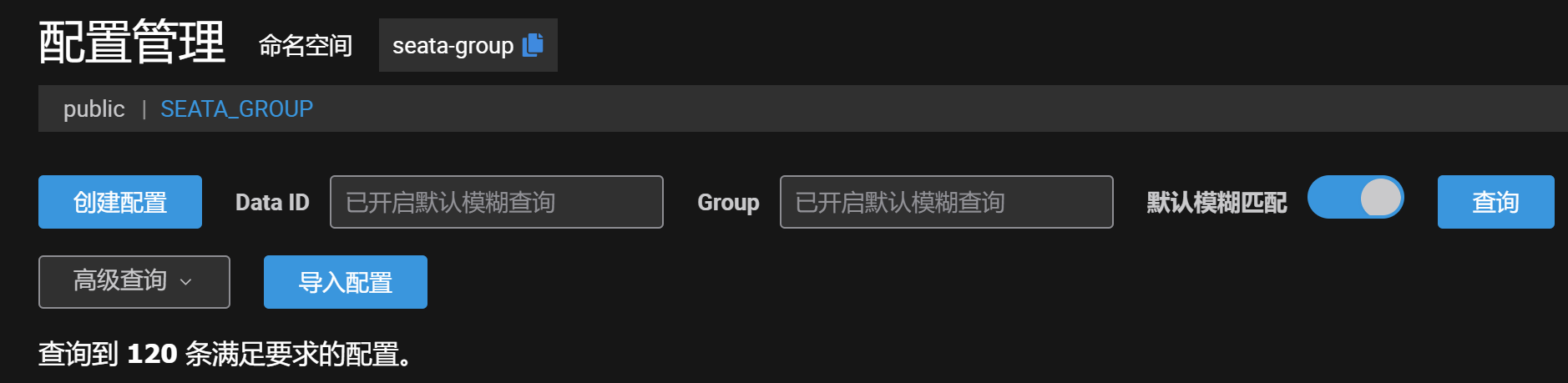

5. Registering Seata with Nacos:

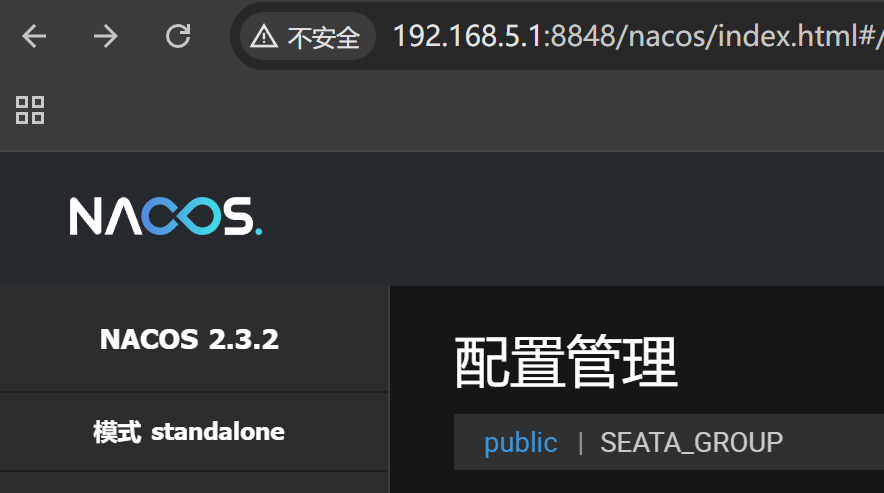

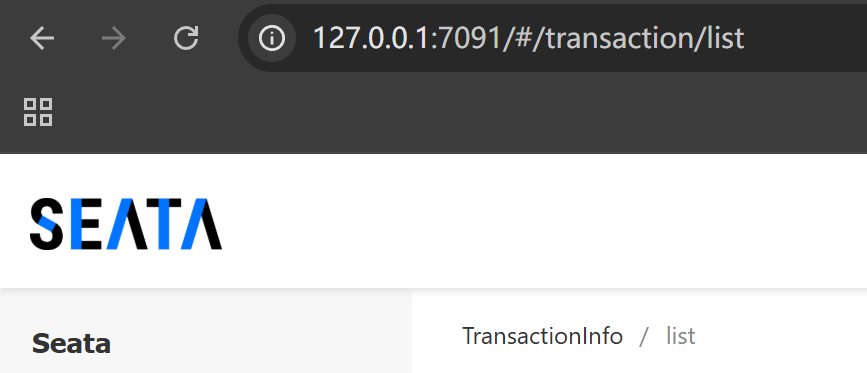

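After completing the steps above, open http://192.168.5.1:8848/nacos/index.html and http://127.0.0.1:7091/#/transaction/list to access the Nacos and Seata consoles (use the address of your own Nacos host in the first URL):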

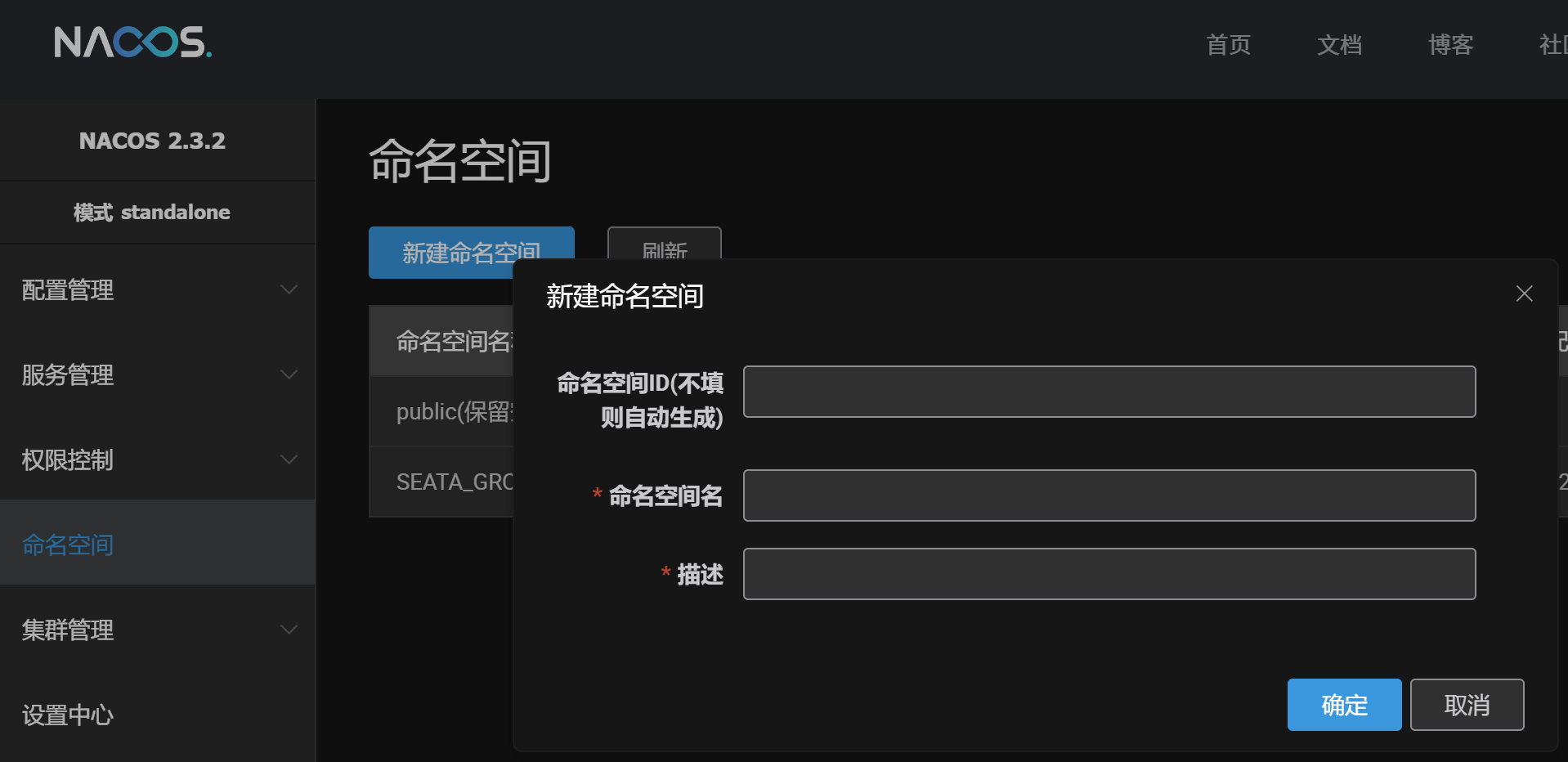

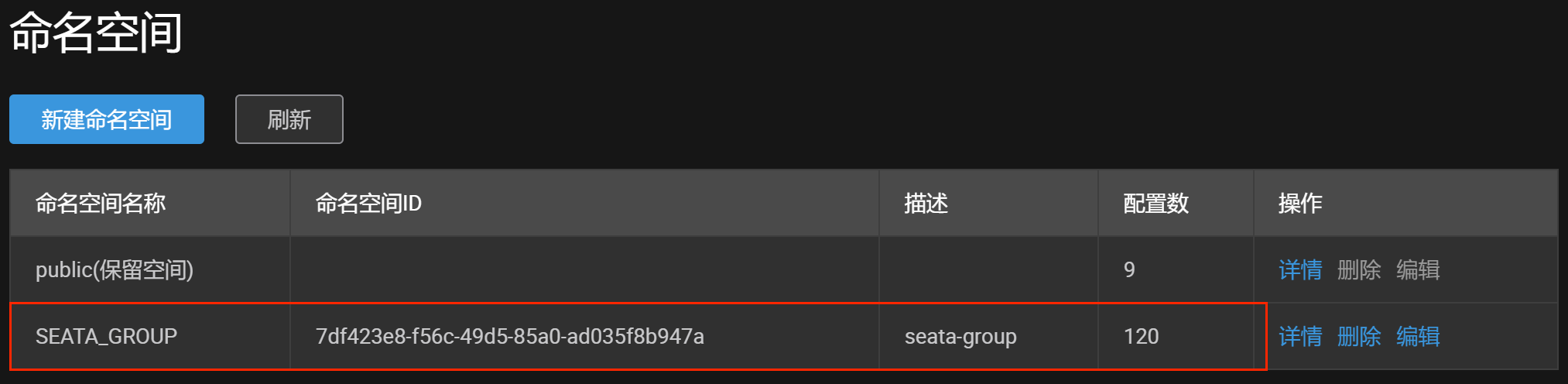

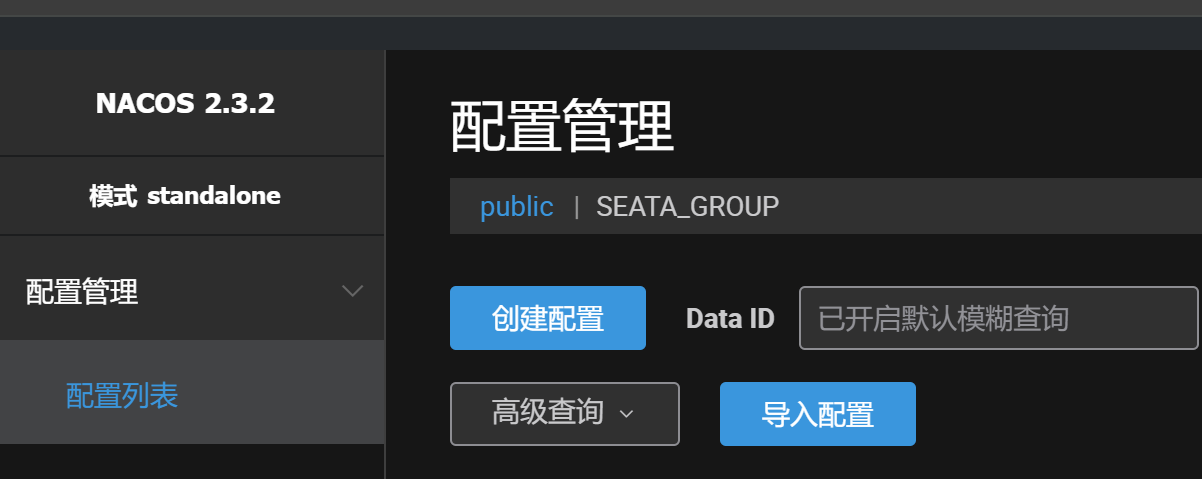

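Create a namespace on the Namespaces page of Nacos. It is recommended that the namespace name match the group used in config.txt under the Seata directory and in Seata's application.yml, and the namespace value in application.yml must be identical to the ID of this namespace:

With the configuration above in place and Git Bash installed, go to \seata\seata-server\script\config-center\nacos, hold Shift and right-click, choose Git Bash Here, and run the following command to import the Seata configuration into Nacos:

sh nacos-config.sh -h localhost -p 8848 -g SEATA_GROUP -t YOUR_NAMESPACE_ID -u nacos -w nacos

Parameters:

-h: host, default localhost

-p: port, default 8848

-g: configuration group, default SEATA_GROUP

-t: tenant, which corresponds to the Nacos namespace ID, default empty

-u: Nacos username, default empty

-w: Nacos password, default empty

If the configuration entries show up in Nacos under that namespace, the import succeeded!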
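Besides looking at the console, a single entry can be read back from the same /nacos/v1/cs/configs endpoint that nacos-config.sh posts to. The sketch below only builds the URL; `nacos_config_url` and `NAMESPACE_ID` are names introduced here, and the commented curl call assumes Nacos accepts the same username and password query parameters that the script itself sends.

```shell
#!/bin/sh
# nacos_config_url <host> <port> <dataId> <group> <namespaceId>
# Builds the GET URL for one config entry (illustrative helper).
nacos_config_url() {
    printf 'http://%s:%s/nacos/v1/cs/configs?dataId=%s&group=%s&tenant=%s\n' \
        "$1" "$2" "$3" "$4" "$5"
}

# NAMESPACE_ID is a placeholder; substitute the ID created in the console.
NAMESPACE_ID="your-namespace-id"
url=$(nacos_config_url localhost 8848 store.mode SEATA_GROUP "$NAMESPACE_ID")
echo "$url"

# With Nacos running, the entry could then be fetched by hand, for example:
#   curl -s "$url&username=nacos&password=nacos"
# which should return the value imported for store.mode.
```

store.mode is used as the dataId because the script stores every key of config.txt as its own entry.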

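6. Configuring RuoYi:

Open the RuoYi project in IDEA, find bootstrap.yml in the resources directory of each module, and add the Nacos username and password to the nacos block:

nacos:
  discovery:
    # service registration address
    server-addr: 127.0.0.1:8848
    username: nacos # add the username
    password: nacos # add the password
  config:
    # config center address
    server-addr: 127.0.0.1:8848
    username: nacos # add the username
    password: nacos # add the password

Open the Nacos console and go to the public namespace under Configuration Management: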

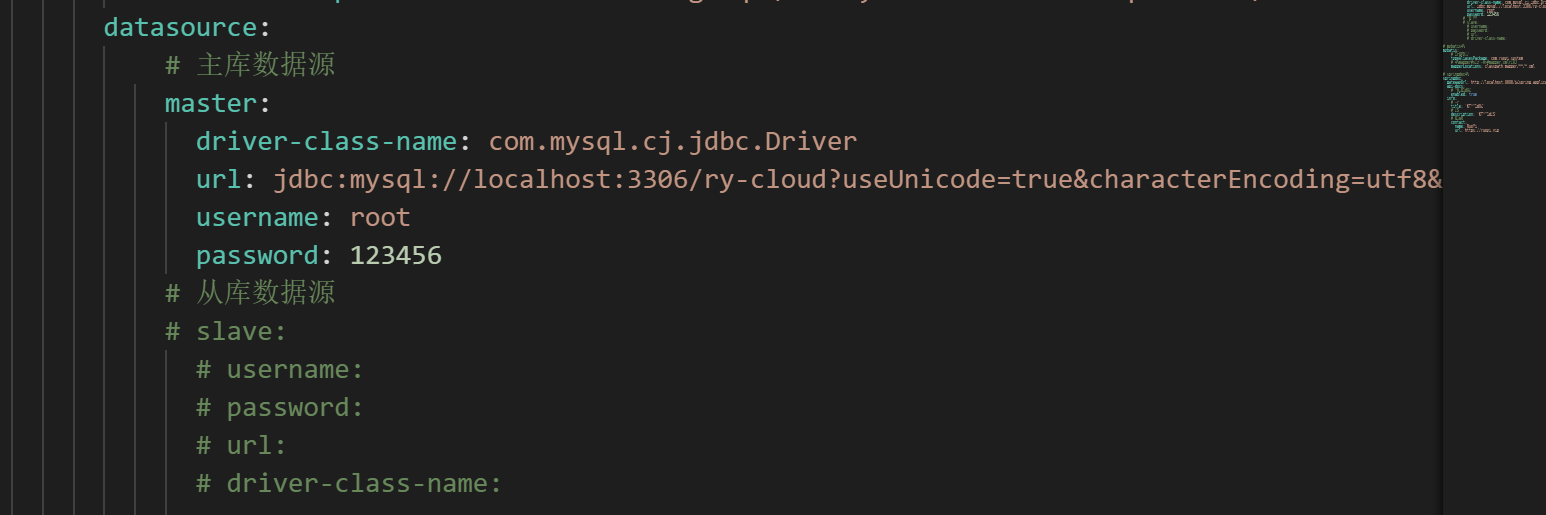

In each of the configurations listed there, change the MySQL password wherever one appears (example in the screenshot):

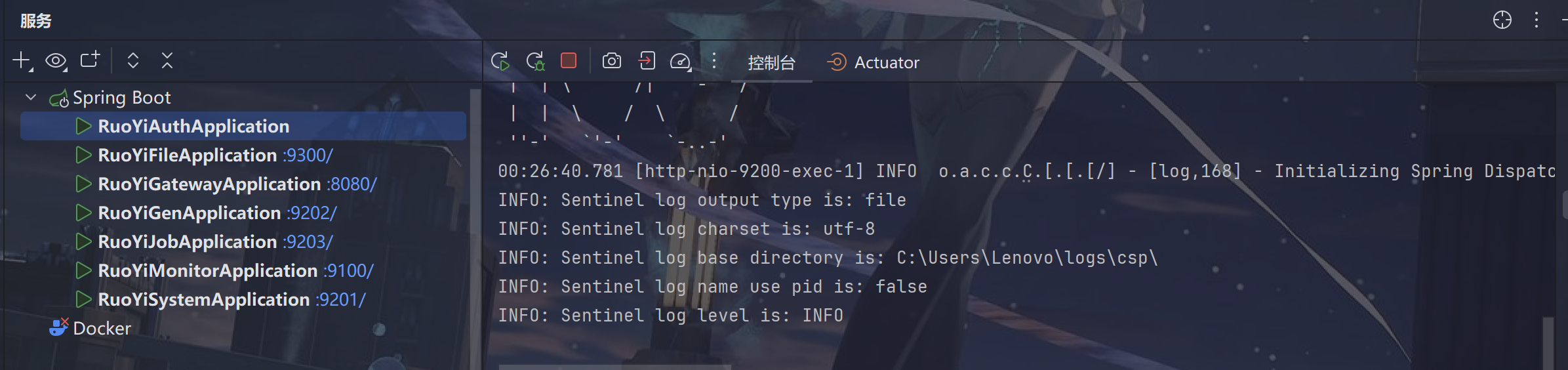

After making these changes, start Redis and then start the RuoYi project:

That concludes this article!