
💡💡💡 All programs in this column have been tested and run successfully 💡💡💡

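Column directory: "YOLOv8 Improvements That Raise Accuracy" column introduction & directory | 80+ articles so far, covering improvements to detection heads (Head), loss functions (Loss), Backbone, Neck, NMS and more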

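Complex networks currently perform very well, but their growing complexity makes deployment harder. For example, the shortcut operations in ResNets consume a large amount of off-chip memory traffic when merging features from different layers. Likewise, the axial shift operation in AS-MLP and the shifted window self-attention in Swin Transformer need sophisticated engineering, including rewritten CUDA code. This article introduces VanillaNet, a neural network architecture with a simple, elegant design that still performs strongly on vision tasks. By dropping excessive depth, shortcuts, and complex operations such as self-attention, VanillaNet avoids these complexity problems and is well suited to resource-constrained environments. After covering the main principles, the article walks step by step through adding and modifying the module code, and shares the complete modified code at the end so you can run it directly; beginners can follow along as well. The goal is to help you get hands-on with YOLO-series object detection.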



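1. Principle

Paper: VanillaNet: the Power of Minimalism in Deep Learning

Official code: official code repository

VanillaNet: main principles

VanillaNet is a neural network architecture designed around simplicity and minimalism. Its core principles and design ideas are explained below, leaving out experimental details: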

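Motivation and philosophy

Simplicity over complexity: deep learning models have grown increasingly complex, with intricate operators and very deep architectures. VanillaNet simplifies this by avoiding excessive depth, shortcuts, and complex operations such as self-attention.

Minimalist design: the architecture is built from compact, straightforward layers, which makes it easier to deploy in resource-constrained environments.

Main architectural features

Layer structure: VanillaNet consists of a very small number of convolutional layers. For example, VanillaNet-6 has only six convolutional layers.

Stage design: the network is divided into stages; in each stage the feature map is downsampled and the number of channels is doubled. This design is inspired by classic networks such as AlexNet and VGGNet.

No shortcuts: unlike architectures such as ResNet, VanillaNet uses no shortcut connections, which simplifies the design and reduces memory consumption.

Non-linear activation functions: during training, each block holds two convolutions with an activation function between them. That inner activation is gradually reduced to a linear (identity) form, so it can be removed once training is finished.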

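Training techniques

Deep training strategy: VanillaNet starts training as a deeper network whose convolutions are separated by activation functions. As training proceeds, these activation functions are gradually reduced to identity mappings. The adjacent convolutions can then be merged into one, which keeps inference fast.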
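To make the decay concrete: for a ReLU, blending the activation with the identity as (1 − λ)·ReLU(x) + λ·x is the same as a leaky ReLU with negative slope λ, which is how the code in section 2.1 expresses it (`leaky_relu(x, self.act_learn)`). The sketch below is plain Python on single values. The linear schedule and the names `act_learn_schedule` and `decay_epochs` are assumptions made for illustration, not something this article's training setup defines.

```python
def blended_relu(x: float, lam: float) -> float:
    """(1 - lam) * ReLU(x) + lam * x for a single value."""
    relu = max(x, 0.0)
    return (1.0 - lam) * relu + lam * x


def leaky_relu(x: float, negative_slope: float) -> float:
    """Scalar leaky ReLU: identity for x >= 0, scaled by negative_slope otherwise."""
    return x if x >= 0 else negative_slope * x


def act_learn_schedule(epoch: int, decay_epochs: int) -> float:
    """Hypothetical linear schedule: 0 at the start, 1 once decay_epochs is reached."""
    return min(epoch / decay_epochs, 1.0)


for epoch in (0, 50, 100):
    lam = act_learn_schedule(epoch, decay_epochs=100)
    # Both columns agree; at lam == 1 the activation is the identity.
    print(epoch, lam, blended_relu(-2.0, lam), leaky_relu(-2.0, lam))
```

In the section 2.1 code, `act_learn` is initialised to 1 and only changes when `change_act` is called, so the inner activation is already an identity unless the training loop drives it from 0 toward 1.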

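Series-informed activation function: to strengthen non-linearity, VanillaNet uses a series-informed activation function that combines several activation terms through learnable weights and also draws on neighbouring positions of the feature map. This raises the non-linear capacity of each layer at little extra cost.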
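Read off the `activation` class in section 2.1, the training-time form of this activation can be written as below. This is a transcription of that code (a ReLU, then a depthwise (2n+1)×(2n+1) convolution with learnable weights, then batch normalization) with n = `act_num`; the symbol names are chosen here for illustration and positions outside the feature map are treated as zero.

```latex
y_{h,w,c} \;=\; \mathrm{BN}_c\!\left( \sum_{i=-n}^{n} \sum_{j=-n}^{n} a_{i,j,c}\, \mathrm{ReLU}\!\left(x_{h+i,\,w+j,\,c}\right) \right)
```

With the default `act_num=3`, each output value therefore mixes a 7×7 neighbourhood of activated inputs from the same channel.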

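Performance and efficiency

Compact and efficient: despite its minimalist approach, VanillaNet reaches performance comparable to more complex networks such as ResNet and Vision Transformers (ViT). It shows that a simple design can still be powerful and offers a different perspective on network design.

Resource optimization: the streamlined architecture makes VanillaNet a good fit for environments with limited compute, such as mobile devices and embedded systems.

Architecture details

Stem block: the first layer is a strided convolution that maps the input image channels (e.g. RGB) to a larger number of channels.

Pooling layers: max pooling downsamples the feature maps in each stage, while the stage's convolution increases the number of channels.

Final layers: the network ends with an average pooling layer followed by a fully connected layer for classification.

Summary

VanillaNet rethinks the design of deep learning models by reducing the architecture to its basic components while still reaching high performance. Its emphasis on minimalism, combined with its training techniques, shows the potential of simpler yet effective models in deep learning.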

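2. Adding VanillaNet to the YOLOv8 network

2.1 VanillaNet code implementation

Key step 1: paste the code below into /ultralytics/ultralytics/nn/modules/block.py, and add the following names to that file's __all__: "vanillanet_5", "vanillanet_6", "vanillanet_7", "vanillanet_8", "vanillanet_9", "vanillanet_10", "vanillanet_11", "vanillanet_12", "vanillanet_13", "vanillanet_13_x1_5", "vanillanet_13_x1_5_ada_pool". Note that the snippet carries its own __all__ = [...] assignment; merge it into the existing list, because a second assignment pasted after the original one would replace block.py's __all__.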

# Copyright (C) 2023. Huawei Technologies Co., Ltd. All rights reserved.
# This program is free software; you can redistribute it and/or modify it under the terms of the MIT License.
# This program is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the MIT License for more details.

import torch
import torch.nn as nn
import torch.nn.functional as F
from timm.models.layers import weight_init, DropPath
import numpy as np

__all__ = ['vanillanet_5', 'vanillanet_6', 'vanillanet_7', 'vanillanet_8', 'vanillanet_9', 'vanillanet_10', 'vanillanet_11', 'vanillanet_12', 'vanillanet_13', 'vanillanet_13_x1_5', 'vanillanet_13_x1_5_ada_pool']

class activation(nn.ReLU):
    def __init__(self, dim, act_num=3, deploy=False):
        super(activation, self).__init__()
        self.deploy = deploy
        self.weight = torch.nn.Parameter(torch.randn(dim, 1, act_num*2 + 1, act_num*2 + 1))
        self.bias = None
        self.bn = nn.BatchNorm2d(dim, eps=1e-6)
        self.dim = dim
        self.act_num = act_num
        weight_init.trunc_normal_(self.weight, std=.02)

    def forward(self, x):
        if self.deploy:
            return torch.nn.functional.conv2d(
                super(activation, self).forward(x),
                self.weight, self.bias, padding=(self.act_num*2 + 1)//2, groups=self.dim)
        else:
            return self.bn(torch.nn.functional.conv2d(
                super(activation, self).forward(x),
                self.weight, padding=self.act_num, groups=self.dim))

    def _fuse_bn_tensor(self, weight, bn):
        kernel = weight
        running_mean = bn.running_mean
        running_var = bn.running_var
        gamma = bn.weight
        beta = bn.bias
        eps = bn.eps
        std = (running_var + eps).sqrt()
        t = (gamma / std).reshape(-1, 1, 1, 1)
        return kernel * t, beta + (0 - running_mean) * gamma / std

    def switch_to_deploy(self):
        if not self.deploy:
            kernel, bias = self._fuse_bn_tensor(self.weight, self.bn)
            self.weight.data = kernel
            self.bias = torch.nn.Parameter(torch.zeros(self.dim))
            self.bias.data = bias
            self.__delattr__('bn')
            self.deploy = True

class VanillaBlock(nn.Module):
    def __init__(self, dim, dim_out, act_num=3, stride=2, deploy=False, ada_pool=None):
        super().__init__()
        self.act_learn = 1
        self.deploy = deploy
        if self.deploy:
            self.conv = nn.Conv2d(dim, dim_out, kernel_size=1)
        else:
            self.conv1 = nn.Sequential(
                nn.Conv2d(dim, dim, kernel_size=1),
                nn.BatchNorm2d(dim, eps=1e-6),
            )
            self.conv2 = nn.Sequential(
                nn.Conv2d(dim, dim_out, kernel_size=1),
                nn.BatchNorm2d(dim_out, eps=1e-6)
            )

        if not ada_pool:
            self.pool = nn.Identity() if stride == 1 else nn.MaxPool2d(stride)
        else:
            self.pool = nn.Identity() if stride == 1 else nn.AdaptiveMaxPool2d((ada_pool, ada_pool))

        self.act = activation(dim_out, act_num)

    def forward(self, x):
        if self.deploy:
            x = self.conv(x)
        else:
            x = self.conv1(x)
            x = torch.nn.functional.leaky_relu(x,self.act_learn)
            x = self.conv2(x)

        x = self.pool(x)
        x = self.act(x)
        return x

    def _fuse_bn_tensor(self, conv, bn):
        kernel = conv.weight
        bias = conv.bias
        running_mean = bn.running_mean
        running_var = bn.running_var
        gamma = bn.weight
        beta = bn.bias
        eps = bn.eps
        std = (running_var + eps).sqrt()
        t = (gamma / std).reshape(-1, 1, 1, 1)
        return kernel * t, beta + (bias - running_mean) * gamma / std

    def switch_to_deploy(self):
        if not self.deploy:
            kernel, bias = self._fuse_bn_tensor(self.conv1[0], self.conv1[1])
            self.conv1[0].weight.data = kernel
            self.conv1[0].bias.data = bias
            # kernel, bias = self.conv2[0].weight.data, self.conv2[0].bias.data
            kernel, bias = self._fuse_bn_tensor(self.conv2[0], self.conv2[1])
            self.conv = self.conv2[0]
            self.conv.weight.data = torch.matmul(kernel.transpose(1,3), self.conv1[0].weight.data.squeeze(3).squeeze(2)).transpose(1,3)
            self.conv.bias.data = bias + (self.conv1[0].bias.data.view(1,-1,1,1)*kernel).sum(3).sum(2).sum(1)
            self.__delattr__('conv1')
            self.__delattr__('conv2')
            self.act.switch_to_deploy()
            self.deploy = True

class VanillaNet(nn.Module):
    def __init__(self, in_chans=3, num_classes=1000, dims=[96, 192, 384, 768],
                 drop_rate=0, act_num=3, strides=[2,2,2,1], deploy=False, ada_pool=None, **kwargs):
        super().__init__()
        self.deploy = deploy
        if self.deploy:
            self.stem = nn.Sequential(
                nn.Conv2d(in_chans, dims[0], kernel_size=4, stride=4),
                activation(dims[0], act_num)
            )
        else:
            self.stem1 = nn.Sequential(
                nn.Conv2d(in_chans, dims[0], kernel_size=4, stride=4),
                nn.BatchNorm2d(dims[0], eps=1e-6),
            )
            self.stem2 = nn.Sequential(
                nn.Conv2d(dims[0], dims[0], kernel_size=1, stride=1),
                nn.BatchNorm2d(dims[0], eps=1e-6),
                activation(dims[0], act_num)
            )

        self.act_learn = 1

        self.stages = nn.ModuleList()
        for i in range(len(strides)):
            if not ada_pool:
                stage = VanillaBlock(dim=dims[i], dim_out=dims[i+1], act_num=act_num, stride=strides[i], deploy=deploy)
            else:
                stage = VanillaBlock(dim=dims[i], dim_out=dims[i+1], act_num=act_num, stride=strides[i], deploy=deploy, ada_pool=ada_pool[i])
            self.stages.append(stage)
        self.depth = len(strides)

        self.apply(self._init_weights)
        self.channel = [i.size(1) for i in self.forward(torch.randn(1, 3, 640, 640))]

    def _init_weights(self, m):
        if isinstance(m, (nn.Conv2d, nn.Linear)):
            weight_init.trunc_normal_(m.weight, std=.02)
            nn.init.constant_(m.bias, 0)

    def change_act(self, m):
        for i in range(self.depth):
            self.stages[i].act_learn = m
        self.act_learn = m

    def forward(self, x):
        res = []
        if self.deploy:
            x = self.stem(x)
        else:
            x = self.stem1(x)
            x = torch.nn.functional.leaky_relu(x,self.act_learn)
            x = self.stem2(x)
        res.append(x)

        for i in range(self.depth):
            x = self.stages[i](x)
            res.append(x)
        return res

    def _fuse_bn_tensor(self, conv, bn):
        kernel = conv.weight
        bias = conv.bias
        running_mean = bn.running_mean
        running_var = bn.running_var
        gamma = bn.weight
        beta = bn.bias
        eps = bn.eps
        std = (running_var + eps).sqrt()
        t = (gamma / std).reshape(-1, 1, 1, 1)
        return kernel * t, beta + (bias - running_mean) * gamma / std

    def switch_to_deploy(self):
        if not self.deploy:
            self.stem2[2].switch_to_deploy()
            kernel, bias = self._fuse_bn_tensor(self.stem1[0], self.stem1[1])
            self.stem1[0].weight.data = kernel
            self.stem1[0].bias.data = bias
            kernel, bias = self._fuse_bn_tensor(self.stem2[0], self.stem2[1])
            self.stem1[0].weight.data = torch.einsum('oi,icjk->ocjk', kernel.squeeze(3).squeeze(2), self.stem1[0].weight.data)
            self.stem1[0].bias.data = bias + (self.stem1[0].bias.data.view(1,-1,1,1)*kernel).sum(3).sum(2).sum(1)
            self.stem = torch.nn.Sequential(*[self.stem1[0], self.stem2[2]])
            self.__delattr__('stem1')
            self.__delattr__('stem2')

            for i in range(self.depth):
                self.stages[i].switch_to_deploy()

            self.deploy = True

def update_weight(model_dict, weight_dict):
    idx, temp_dict = 0, {}
    for k, v in weight_dict.items():
        if k in model_dict.keys() and np.shape(model_dict[k]) == np.shape(v):
            temp_dict[k] = v
            idx += 1
    model_dict.update(temp_dict)
    print(f'loading weights... {idx}/{len(model_dict)} items')
    return model_dict

def vanillanet_5(pretrained='',in_22k=False, **kwargs):
    model = VanillaNet(dims=[128//2, 256//2, 512//2, 1024//2], strides=[2,2,2], **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_6(pretrained='',in_22k=False, **kwargs):
    model = VanillaNet(dims=[128*4, 256*4, 512*4, 1024*4, 1024*4], strides=[2,2,2,1], **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_7(pretrained='',in_22k=False, **kwargs):
    model = VanillaNet(dims=[128*4, 128*4, 256*4, 512*4, 1024*4, 1024*4], strides=[1,2,2,2,1], **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_8(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(dims=[128*4, 128*4, 256*4, 512*4, 512*4, 1024*4, 1024*4], strides=[1,2,2,1,2,1], **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_9(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(dims=[128*4, 128*4, 256*4, 512*4, 512*4, 512*4, 1024*4, 1024*4], strides=[1,2,2,1,1,2,1], **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_10(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(
        dims=[128*4, 128*4, 256*4, 512*4, 512*4, 512*4, 512*4, 1024*4, 1024*4],
        strides=[1,2,2,1,1,1,2,1],
        **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_11(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(
        dims=[128*4, 128*4, 256*4, 512*4, 512*4, 512*4, 512*4, 512*4, 1024*4, 1024*4],
        strides=[1,2,2,1,1,1,1,2,1],
        **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_12(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(
        dims=[128*4, 128*4, 256*4, 512*4, 512*4, 512*4, 512*4, 512*4, 512*4, 1024*4, 1024*4],
        strides=[1,2,2,1,1,1,1,1,2,1],
        **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_13(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(
        dims=[128*4, 128*4, 256*4, 512*4, 512*4, 512*4, 512*4, 512*4, 512*4, 512*4, 1024*4, 1024*4],
        strides=[1,2,2,1,1,1,1,1,1,2,1],
        **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_13_x1_5(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(
        dims=[128*6, 128*6, 256*6, 512*6, 512*6, 512*6, 512*6, 512*6, 512*6, 512*6, 1024*6, 1024*6],
        strides=[1,2,2,1,1,1,1,1,1,2,1],
        **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

def vanillanet_13_x1_5_ada_pool(pretrained='', in_22k=False, **kwargs):
    model = VanillaNet(
        dims=[128*6, 128*6, 256*6, 512*6, 512*6, 512*6, 512*6, 512*6, 512*6, 512*6, 1024*6, 1024*6],
        strides=[1,2,2,1,1,1,1,1,1,2,1],
        ada_pool=[0,40,20,0,0,0,0,0,0,10,0],
        **kwargs)
    if pretrained:
        weights = torch.load(pretrained)['model_ema']
        model.load_state_dict(update_weight(model.state_dict(), weights))
    return model

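Main flow of image processing in VanillaNet

VanillaNet is a simplified neural network architecture designed to keep the structure as simple as possible while maintaining high performance. Its main processing flow is as follows:

1. Input preprocessing

The input image first passes through an input layer that converts the raw three-channel RGB data into a multi-channel feature map suitable for convolution.

2. Stem block

Convolution: the input image goes through a 4×4 convolution with C kernels and stride 4. This maps the image from 3 channels (RGB) to C channels and downsamples it.

Purpose: the convolution reduces the spatial size of the image while increasing the channel count, preparing for the feature extraction that follows.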
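As a worked example of this downsampling, the sketch below computes the feature-map shapes produced by a stride-4 stem followed by stride-2 pooling stages, using the `dims` and `strides` of the `vanillanet_5` factory from section 2.1 and the 640×640 dummy input the backbone code uses. The function name `vanillanet_feature_shapes` is made up for this illustration.

```python
def vanillanet_feature_shapes(dims, strides, input_size=640, stem_stride=4):
    """Return (channels, height, width) for the stem output and every stage output."""
    size = input_size // stem_stride  # 4x4 convolution, stride 4, no padding
    shapes = [(dims[0], size, size)]
    for i, stride in enumerate(strides):
        size //= stride  # MaxPool2d(stride); stride 1 means no pooling
        shapes.append((dims[i + 1], size, size))
    return shapes


shapes = vanillanet_feature_shapes(dims=[64, 128, 256, 512], strides=[2, 2, 2])
print(shapes)  # [(64, 160, 160), (128, 80, 80), (256, 40, 40), (512, 20, 20)]
```

The last three maps sit at strides 8, 16 and 32 relative to the input, which is what the P3, P4 and P5 branches of the YOLOv8 head expect.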

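3. Main body

The main body of VanillaNet described here has four stages, each made up of a convolutional layer and a pooling layer (the `vanillanet_5` variant used later in the YAML has three stages). The flow is:

Stages 1, 2, 3:

Convolution: each stage contains a 1×1 convolution, chosen to keep the compute cost low while preserving the information in the feature map.

Pooling: a max pooling layer with stride 2 reduces the spatial size (width and height) of the feature map; the channel count is increased by the stage's convolution, not by the pooling.

Batch normalization: a batch normalization layer follows each convolution to speed up and stabilize training.

Stage 4:

Convolution: a 1×1 convolution again, but this stage does not increase the channel count.

Pooling: an average pooling layer further reduces the spatial size of the feature map in preparation for the final classification.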
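The batch normalization layers above do not survive into the deployed model: `_fuse_bn_tensor` in section 2.1 folds each one into the preceding convolution. The plain-Python sketch below shows the same arithmetic for a single channel, treating the convolution as a scalar `weight * x + bias`; the function names and the sample numbers are illustrative assumptions.

```python
import math


def conv_then_bn(x, weight, bias, gamma, beta, mean, var, eps=1e-6):
    """Reference path: a (scalar) convolution followed by inference-mode batch norm."""
    y = weight * x + bias
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


def fold_bn(weight, bias, gamma, beta, mean, var, eps=1e-6):
    """Fold batch norm into the convolution, mirroring _fuse_bn_tensor."""
    scale = gamma / math.sqrt(var + eps)
    return weight * scale, beta + (bias - mean) * scale


stats = dict(gamma=1.5, beta=0.2, mean=0.4, var=2.0)
fused_weight, fused_bias = fold_bn(0.8, -0.1, **stats)

x = 3.0
print(conv_then_bn(x, 0.8, -0.1, **stats), fused_weight * x + fused_bias)
```

Both printed values agree up to floating-point rounding, so the fused layer can replace the convolution and batch norm pair at inference time.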

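4. Non-linear activation functions

Initial activation: an activation function (e.g. ReLU) is applied after each convolutional layer to strengthen the non-linearity of the network.

Deep training strategy: during training, the activation functions are gradually reduced to identity mappings so that the convolutional layers can be merged, which keeps inference fast.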
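Once the activation between two 1×1 convolutions has become the identity, the pair collapses into a single 1×1 convolution with weight W = W2·W1 and bias b = W2·b1 + b2, which is what `VanillaBlock.switch_to_deploy` in section 2.1 computes with tensors. The sketch below does the same with nested lists at a single pixel; it assumes batch normalization has already been folded in, and the helper names and sample weights are made up for illustration.

```python
def conv1x1(x, weight, bias):
    """Apply a 1x1 convolution at one pixel; x holds that pixel's channel values."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def merge_conv1x1(w1, b1, w2, b2):
    """Fold conv2(conv1(x)) into one 1x1 convolution: W = W2 @ W1, b = W2 @ b1 + b2."""
    mid, c_in = len(w1), len(w1[0])
    weight = [[sum(row[m] * w1[m][i] for m in range(mid)) for i in range(c_in)] for row in w2]
    bias = [sum(row[m] * b1[m] for m in range(mid)) + b for row, b in zip(w2, b2)]
    return weight, bias


w1, b1 = [[1.0, 2.0], [0.0, -1.0]], [0.5, 1.0]                   # 2 -> 2 channels
w2, b2 = [[2.0, 1.0], [1.0, 0.0], [0.0, 3.0]], [0.0, 1.0, -1.0]  # 2 -> 3 channels
x = [3.0, -2.0]

two_step = conv1x1(conv1x1(x, w1, b1), w2, b2)
merged_w, merged_b = merge_conv1x1(w1, b1, w2, b2)
one_step = conv1x1(x, merged_w, merged_b)
print(two_step, one_step)  # [2.0, 0.5, 8.0] [2.0, 0.5, 8.0]
```

The merge is only exact while nothing non-linear sits between the two convolutions, which is why the activation has to reach the identity first.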

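5. Fully connected layer

Feature mapping: after the stages above, the final feature map passes through a fully connected layer that outputs the classification result.

Role: the fully connected layer maps the high-dimensional features to concrete class labels. In the backbone code of section 2.1 this classification head is left out; `forward` returns the list of stage feature maps, which the YOLOv8 neck and head consume.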

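2.2 Modify the __init__.py file

Key step 2: edit the __init__.py file in the modules folder. First import the functions, that is, the eleven vanillanet_* names listed in step 1, from block.py.

Then declare the same function names in the __all__ list further down in that file.

2.3 Add the yaml file

Key step 3: create a new file named yolov8_VanillaNet.yaml under /ultralytics/ultralytics/cfg/models/v8 and paste in the content below.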

- OD (object detection)

# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, vanillanet_5, []] # 4
  - [-1, 1, SPPF, [1024, 5]] # 5

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 6
  - [[-1, 3], 1, Concat, [1]] # 7 cat backbone P4
  - [-1, 3, C2f, [512]] # 8

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 9
  - [[-1, 2], 1, Concat, [1]] # 10 cat backbone P3
  - [-1, 3, C2f, [256]] # 11 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]] # 12
  - [[-1, 8], 1, Concat, [1]] # 13 cat head P4
  - [-1, 3, C2f, [512]] # 14 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]] # 15
  - [[-1, 5], 1, Concat, [1]] # 16 cat head P5
  - [-1, 3, C2f, [1024]] # 17 (P5/32-large)

  - [[11, 14, 17], 1, Detect, [nc]] # Detect(P3, P4, P5)

- Seg (instance segmentation)

# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, vanillanet_5, []] # 4
  - [-1, 1, SPPF, [1024, 5]] # 5

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 6
  - [[-1, 3], 1, Concat, [1]] # 7 cat backbone P4
  - [-1, 3, C2f, [512]] # 8

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 9
  - [[-1, 2], 1, Concat, [1]] # 10 cat backbone P3
  - [-1, 3, C2f, [256]] # 11 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]] # 12
  - [[-1, 8], 1, Concat, [1]] # 13 cat head P4
  - [-1, 3, C2f, [512]] # 14 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]] # 15
  - [[-1, 5], 1, Concat, [1]] # 16 cat head P5
  - [-1, 3, C2f, [1024]] # 17 (P5/32-large)

  - [[11, 14, 17], 1, Segment, [nc, 32, 256]] # Segment(P3, P4, P5)

2.4 Register the module

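Key step 4: in tasks.py, replace the parse_model function with the code below. Because parse_model resolves module names through globals(), the vanillanet_* functions also need to be imported at the top of tasks.py.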

def parse_model(
    d, ch, verbose=True, warehouse_manager=None
):  # model_dict, input_channels(3)
    """Parse a YOLO model.yaml dictionary into a PyTorch model."""
    import ast

    # Args
    max_channels = float("inf")
    nc, act, scales = (d.get(x) for x in ("nc", "activation", "scales"))
    depth, width, kpt_shape = (
        d.get(x, 1.0) for x in ("depth_multiple", "width_multiple", "kpt_shape")
    )
    if scales:
        scale = d.get("scale")
        if not scale:
            scale = tuple(scales.keys())[0]
            LOGGER.warning(
                f"WARNING ⚠️ no model scale passed. Assuming scale='{scale}'."
            )
        depth, width, max_channels = scales[scale]

    if act:
        Conv.default_act = eval(
            act
        )  # redefine default activation, i.e. Conv.default_act = nn.SiLU()
        if verbose:
            LOGGER.info(f"{colorstr('activation:')} {act}")  # print

    if verbose:
        LOGGER.info(
            f"\n{'':>3}{'from':>20}{'n':>3}{'params':>10} {'module':<45}{'arguments':<30}"
        )
    ch = [ch]
    layers, save, c2 = [], [], ch[-1]  # layers, savelist, ch out
    is_backbone = False
    for i, (f, n, m, args) in enumerate(
        d["backbone"] + d["head"]
    ):  # from, number, module, args
        try:
            if m == "node_mode":
                m = d[m]
                if len(args) > 0:
                    if args[0] == "head_channel":
                        args[0] = int(d[args[0]])
            t = m
            m = getattr(torch.nn, m[3:]) if "nn." in m else globals()[m]  # get module
        except:
            pass
        for j, a in enumerate(args):
            if isinstance(a, str):
                with contextlib.suppress(ValueError):
                    try:
                        args[j] = locals()[a] if a in locals() else ast.literal_eval(a)
                    except:
                        args[j] = a

        n = n_ = max(round(n * depth), 1) if n > 1 else n  # depth gain
        if m in (
            Classify, Conv, ConvTranspose, GhostConv, Bottleneck, GhostBottleneck,
            SPP, SPPF, DWConv, Focus, BottleneckCSP, C1, C2, C2f, C3, C3TR, C3Ghost,
            nn.Conv2d, nn.ConvTranspose2d, DWConvTranspose2d, C3x, RepC3,
        ):
            if args[0] == "head_channel":
                args[0] = d[args[0]]
            c1, c2 = ch[f], args[0]
            if (
                c2 != nc
            ):  # if c2 not equal to number of classes (i.e. for Classify() output)
                c2 = make_divisible(min(c2, max_channels) * width, 8)

            args = [c1, c2, *args[1:]]
            if m in (
                RepNCSPELAN4,
            ):
                args[2] = make_divisible(min(args[2], max_channels) * width, 8)
                args[3] = make_divisible(min(args[3], max_channels) * width, 8)
            if m in (BottleneckCSP, C1, C2, C2f, C3, C3TR, C3Ghost, C3x, RepC3):
                args.insert(2, n)  # number of repeats
                n = 1
        elif m is AIFI:
            args = [ch[f], *args]
        elif m in (HGStem, HGBlock):
            c1, cm, c2 = ch[f], args[0], args[1]
            if (
                c2 != nc
            ):  # if c2 not equal to number of classes (i.e. for Classify() output)
                c2 = make_divisible(min(c2, max_channels) * width, 8)
                cm = make_divisible(min(cm, max_channels) * width, 8)
            args = [c1, cm, c2, *args[2:]]
            if m is HGBlock:  # was `m in (HGBlock)`, which is not a tuple and raises TypeError
                args.insert(4, n)  # number of repeats
                n = 1
        elif m is ResNetLayer:
            c2 = args[1] if args[3] else args[1] * 4
        elif m is nn.BatchNorm2d:
            args = [ch[f]]
        elif m is Concat:
            c2 = sum(ch[x] for x in f)
        elif m in (Detect, Segment, Pose, OBB):
            args.append([ch[x] for x in f])
            if m in (
                Segment,
            ):
                args[2] = make_divisible(min(args[2], max_channels) * width, 8)
        elif m is RTDETRDecoder:  # special case, channels arg must be passed in index 1
            args.insert(1, [ch[x] for x in f])
        elif m is CBLinear:
            c2 = make_divisible(min(args[0][-1], max_channels) * width, 8)
            c1 = ch[f]
            args = [
                c1,
                [make_divisible(min(c2_, max_channels) * width, 8) for c2_ in args[0]],
                *args[1:],
            ]
        elif m is CBFuse:
            c2 = ch[f[-1]]
        elif isinstance(m, str):
            t = m
            if len(args) == 2:
                m = timm.create_model(
                    m,
                    pretrained=args[0],
                    pretrained_cfg_overlay={"file": args[1]},
                    features_only=True,
                )
            elif len(args) == 1:
                m = timm.create_model(m, pretrained=args[0], features_only=True)
            c2 = m.feature_info.channels()
        elif m in {
            vanillanet_5, vanillanet_6, vanillanet_7, vanillanet_8, vanillanet_9,
            vanillanet_10, vanillanet_11, vanillanet_12, vanillanet_13,
            vanillanet_13_x1_5, vanillanet_13_x1_5_ada_pool,
        }:
            m = m(*args)  # build the backbone; m is now a module instance
            c2 = m.channel  # list of output channels, one per feature map
        else:
            c2 = ch[f]

        if isinstance(c2, list):
            is_backbone = True
            m_ = m
            m_.backbone = True
        else:
            m_ = (
                nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args)
            )  # module
            t = str(m)[8:-2].replace("__main__.", "")  # module type
        m.np = sum(x.numel() for x in m_.parameters())  # number params
        m_.i, m_.f, m_.type = (
            i + 4 if is_backbone else i,
            f,
            t,
        )  # attach index, 'from' index, type
        if verbose:
            LOGGER.info(
                f"{i:>3}{str(f):>20}{n_:>3}{m.np:10.0f} {t:<45}{str(args):<30}"
            )  # print
        save.extend(
            x % (i + 4 if is_backbone else i)
            for x in ([f] if isinstance(f, int) else f)
            if x != -1
        )  # append to savelist
        layers.append(m_)
        if i == 0:
            ch = []
        if isinstance(c2, list):
            ch.extend(c2)
            for _ in range(5 - len(ch)):
                ch.insert(0, 0)
        else:
            ch.append(c2)

    return nn.Sequential(*layers), sorted(save)

2.5 Replace the function
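Both parse_model and the _predict_once replacement in this section left-pad the backbone's outputs to five slots, so that the `from` indices in the YAML (2, 3 and 4 for P3, P4 and P5) stay the same regardless of how many feature maps a backbone returns. The plain-Python sketch below mirrors those `insert(0, ...)` loops for `vanillanet_5`; the helper name `pad_to_slots` and the (channels, stride) tuples are stand-ins for the real tensors.

```python
def pad_to_slots(items, slots=5, fill=None):
    """Left-pad a list so it always has `slots` entries."""
    padded = list(items)
    for _ in range(slots - len(padded)):
        padded.insert(0, fill)
    return padded


# vanillanet_5 returns four feature maps; (channels, stride) stands in for each tensor.
features = [(64, 4), (128, 8), (256, 16), (512, 32)]
slots = pad_to_slots(features)

p3, p4, p5 = slots[2], slots[3], slots[4]
first_layer_after_backbone = len(slots)  # the SPPF layer, hence the "# 5" comment in the YAML

print(slots[0], p3, p4, p5, first_layer_after_backbone)
```

This is why the head concatenates with layers 3 and 2 (`[[-1, 3], ...]`, `[[-1, 2], ...]`) and why every layer index after the backbone is shifted by 4.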

Key step 5: in tasks.py, replace the _predict_once method of the BaseModel class with the code below

def _predict_once(self, x, profile=False, visualize=False, embed=None):

"""

Perform a forward pass through the network.

Args:

x (torch.Tensor): The input tensor to the model.

profile (bool): Print the computation time of each layer if True, defaults to False.

visualize (bool): Save the feature maps of the model if True, defaults to False.

embed (list, optional): A list of feature vectors/embeddings to return.

Returns:

(torch.Tensor): The last output of the model.

"""

y, dt, embeddings = [], [], [] # outputs

for m in self.model:

if m.f != -1: # if not from previous layer

x = (y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]) # from earlier layers

if profile:

self._profile_one_layer(m, x, dt)

if hasattr(m, "backbone"):

x = m(x)

for _ in range(5 - len(x)):

x.insert(0, None)

for i_idx, i in enumerate(x):

if i_idx in self.save:

y.append(i)

else:

y.append(None)

# for i in x:

# if i is not None:

# print(i.size())

x = x[-1]

else:

x = m(x) # run

y.append(x if m.i in self.save else None) # save output

if visualize:

feature_visualization(x, m.type, m.i, save_dir=visualize)

if embed and m.i in embed:

embeddings.append(nn.functional.adaptive_avg_pool2d(x, (1, 1)).squeeze(-1).squeeze(-1)) # flatten

if m.i == max(embed):

return torch.unbind(torch.cat(embeddings, 1), dim=0)

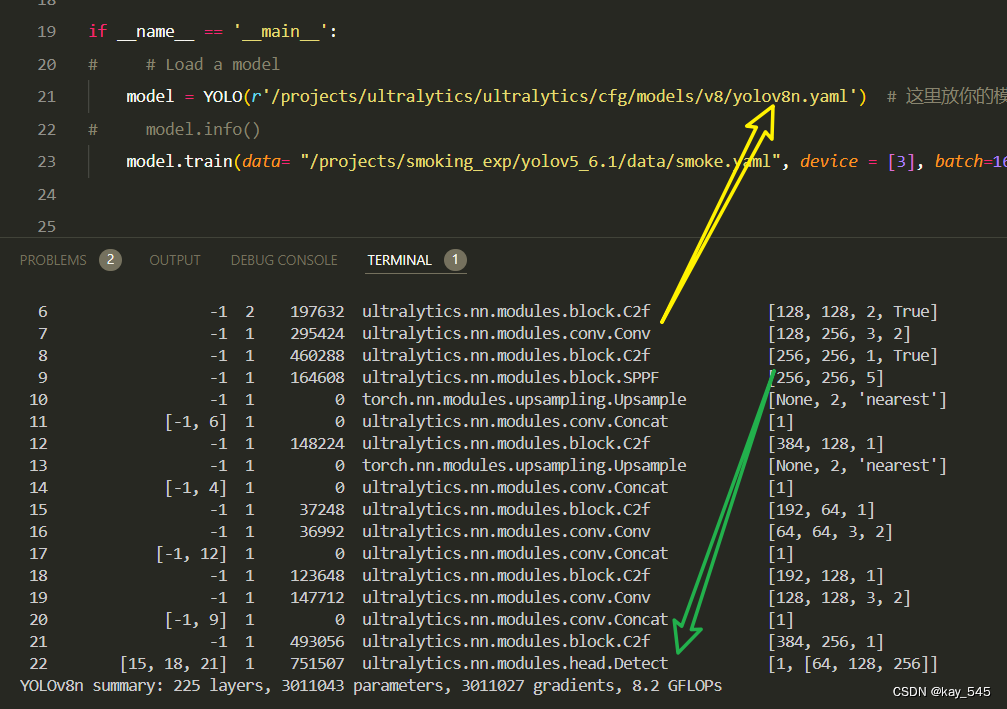

return x2.6 执行程序

In train.py, set the model argument to the path of yolov8_VanillaNet.yaml.

An absolute path is recommended, so the file is always found.

from ultralytics import YOLO

# Load a model
# model = YOLO('yolov8n.yaml') # build a new model from YAML
# model = YOLO('yolov8n.pt') # load a pretrained model (recommended for training)
model = YOLO(r'/projects/ultralytics/ultralytics/cfg/models/v8/yolov8_VanillaNet.yaml') # build the modified model from YAML

# Train the model
model.train(batch=16)

🚀 Run the program; if output like the following appears, the module was added successfully 🚀

                   from  n    params  module                                       arguments
  0                  -1  1    318592  vanillanet_5                                 []
  1                  -1  1    394240  ultralytics.nn.modules.block.SPPF            [512, 256, 5]
  2                  -1  1         0  torch.nn.modules.upsampling.Upsample         [None, 2, 'nearest']
  3             [-1, 3]  1         0  ultralytics.nn.modules.conv.Concat           [1]
  4                  -1  1    164608  ultralytics.nn.modules.block.C2f             [512, 128, 1]
  5                  -1  1         0  torch.nn.modules.upsampling.Upsample         [None, 2, 'nearest']
  6             [-1, 2]  1         0  ultralytics.nn.modules.conv.Concat           [1]
  7                  -1  1     41344  ultralytics.nn.modules.block.C2f             [256, 64, 1]
  8                  -1  1     36992  ultralytics.nn.modules.conv.Conv             [64, 64, 3, 2]
  9             [-1, 8]  1         0  ultralytics.nn.modules.conv.Concat           [1]
 10                  -1  1    123648  ultralytics.nn.modules.block.C2f             [192, 128, 1]
 11                  -1  1    147712  ultralytics.nn.modules.conv.Conv             [128, 128, 3, 2]
 12             [-1, 5]  1         0  ultralytics.nn.modules.conv.Concat           [1]
 13                  -1  1    493056  ultralytics.nn.modules.block.C2f             [384, 256, 1]
 14        [11, 14, 17]  1    897664  ultralytics.nn.modules.head.Detect           [80, [64, 128, 256]]

YOLOv8_vanillanet summary: 176 layers, 2617856 parameters, 2617840 gradients

3. Full code

https://pan.baidu.com/s/1xBKM9rKjGsrVT2tZ1gm0mQ?pwd=jgdi (extraction code: jgdi)

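4. GFLOPs

For how GFLOPs are calculated, see: 百面算法工程师 | Convolution basics (Convolution)

GFLOPs of the unmodified YOLOv8n (8.9 GFLOPs according to the comment in the YAML above)

GFLOPs after the modification

I have no GPU available at the moment; I will fill this in once I have one again. If you need the number, please measure it yourself.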
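Until a measured figure is added, the backbone can at least be checked by hand. The plain-Python sketch below counts parameters and multiply-accumulates (MACs) for the training-time `vanillanet_5` backbone from section 2.1 at a 640×640 input. It is a rough estimate under stated assumptions: it covers the backbone only, ignores the cost of batch norm, pooling and the leaky ReLU, and counts one MAC as two operations; the helper names are illustrative. The parameter count it produces can be compared with the `vanillanet_5` row of the summary printed in section 2.6.

```python
def conv(c_in, c_out, k, out_hw, groups=1):
    """(parameters, MACs) of a k x k convolution with bias on an out_hw x out_hw output."""
    weights = c_out * (c_in // groups) * k * k
    return weights + c_out, weights * out_hw * out_hw


def batch_norm(channels):
    """BatchNorm2d has a learnable scale and shift per channel; its cost is ignored here."""
    return 2 * channels, 0


def series_activation(channels, out_hw, act_num=3):
    """Depthwise (2*act_num+1)^2 convolution without bias, followed by BatchNorm2d."""
    k = 2 * act_num + 1
    weights = channels * k * k
    return weights + 2 * channels, weights * out_hw * out_hw


def count_vanillanet5(input_size=640):
    dims, strides = [64, 128, 256, 512], [2, 2, 2]
    hw = input_size // 4  # stem: 4x4 convolution with stride 4
    parts = [
        conv(3, dims[0], 4, hw), batch_norm(dims[0]),
        conv(dims[0], dims[0], 1, hw), batch_norm(dims[0]),
        series_activation(dims[0], hw),
    ]
    for i, stride in enumerate(strides):
        parts += [
            conv(dims[i], dims[i], 1, hw), batch_norm(dims[i]),
            conv(dims[i], dims[i + 1], 1, hw), batch_norm(dims[i + 1]),
        ]
        hw //= stride  # max pooling runs before the stage's activation
        parts.append(series_activation(dims[i + 1], hw))
    params = sum(p for p, _ in parts)
    macs = sum(m for _, m in parts)
    return params, macs


params, macs = count_vanillanet5()
print(params)          # 318592, matching the vanillanet_5 row of the summary
print(2 * macs / 1e9)  # rough backbone-only GFLOPs estimate
```

A whole-model figure still has to come from an actual profiling run, since the neck and head are not included here.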

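5. Going further

VanillaNet can be combined with other attention mechanisms, loss functions and similar improvements to push detection performance further.

6. Summary

VanillaNet is a minimalist neural network architecture that processes and classifies images efficiently by reducing the number of layers, simplifying operations, and avoiding complex connection patterns such as self-attention and residual connections. Its main ideas are: extract features with a small number of convolutional layers; use a staged design that progressively downsamples the feature map and increases the channel count, with each stage consisting of one convolutional layer and one pooling layer to keep computation simple; during training, apply the deep training strategy, which gradually reduces the initial activation functions to identity mappings so that convolutional layers can be merged and inference sped up; and finally map the high-dimensional features to class labels through a fully connected layer. This design maintains model performance while making better use of resources, which suits environments with limited compute.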