这篇文章会带给你

- 如何使用 LangChain:一套在大模型能力上封装的工具框架

- 如何用几行代码实现一个复杂的 AI 应用

- 面向大模型的流程开发的过程抽象

写在前面

官网:https://www.langchain.com/

LangChain 也是一套面向大模型的开发框架(SDK)

LangChain 是 AGI 时代软件工程的一个探索和原型

学习 LangChain 要关注接口变更

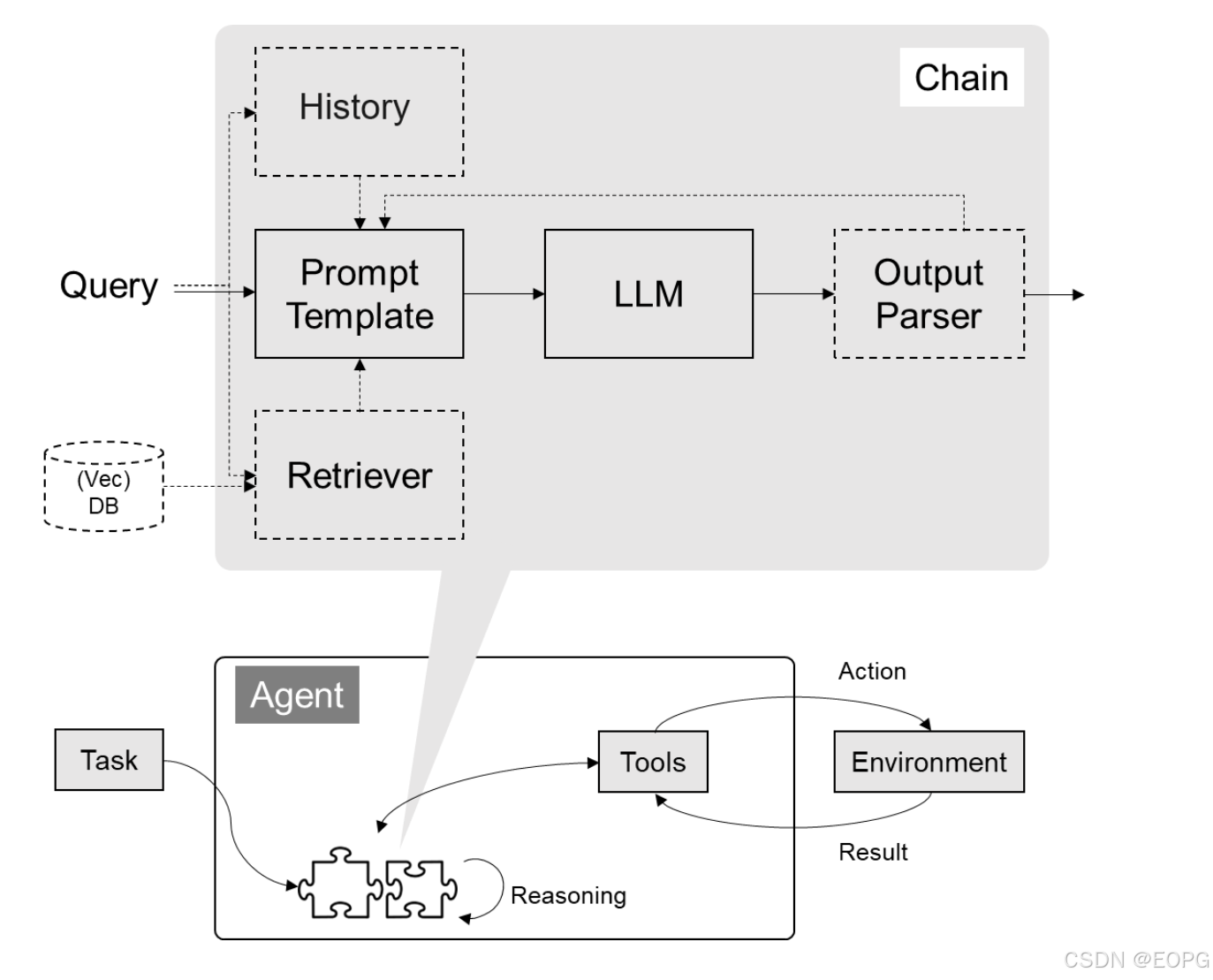

LangChain 的核心组件

- 模型 I/O 封装

- LLMs:大语言模型

- Chat Models:一般基于 LLMs,但按对话结构重新封装

- PromptTemple:提示词模板

- OutputParser:解析输出

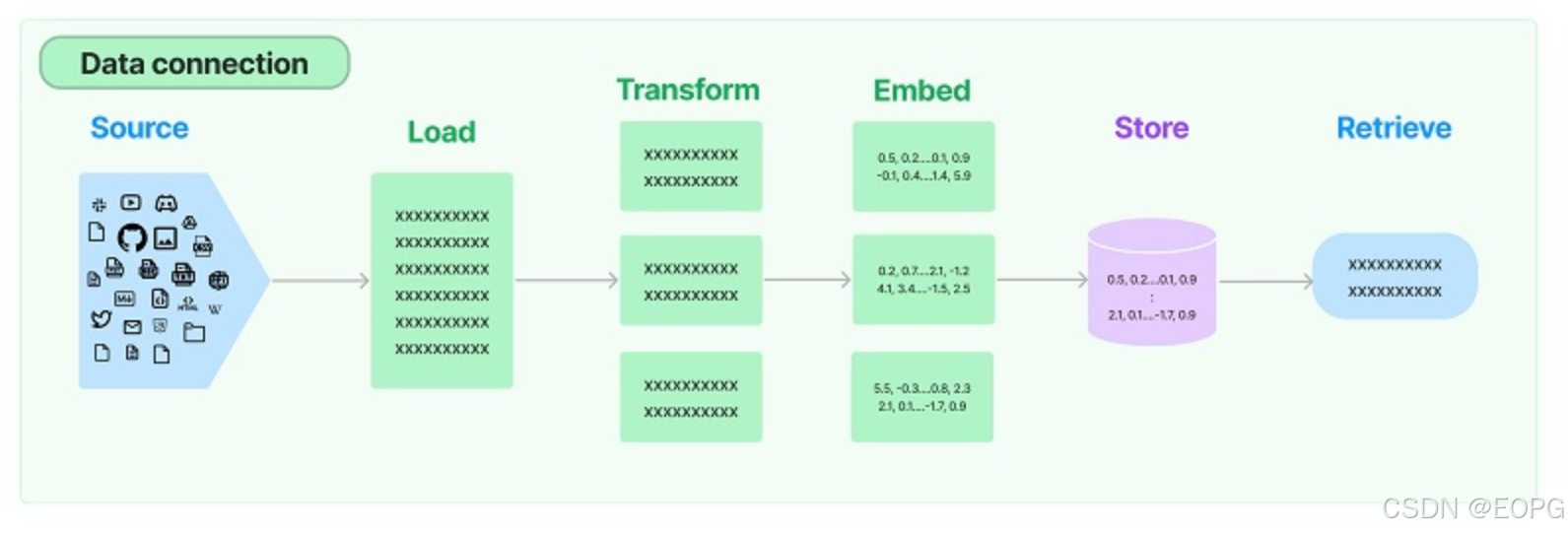

- 数据连接封装

- Document Loaders:各种格式文件的加载器

- Document Transformers:对文档的常用操作,如:split, filter, translate, extract metadata, etc

- Text Embedding Models:文本向量化表示,用于检索等操作(啥意思?别急,后面详细讲)

- Verctorstores: (面向检索的)向量的存储

- Retrievers: 向量的检索

- 对话历史管理

- 对话历史的存储、加载与剪裁

- 架构封装

- Chain:实现一个功能或者一系列顺序功能组合

- Agent:根据用户输入,自动规划执行步骤,自动选择每步需要的工具,最终完成用户指定的功能

- Tools:调用外部功能的函数,例如:调 google 搜索、文件 I/O、Linux Shell 等等

- Toolkits:操作某软件的一组工具集,例如:操作 DB、操作 Gmail 等等

- 回调

文档(以 Python 版为例)

- 功能模块:https://python.langchain.com/docs/get_started/introduction

- API 文档:https://api.python.langchain.com/en/latest/langchain_api_reference.html

- 三方组件集成:https://python.langchain.com/docs/integrations/platforms/

- 官方应用案例:https://python.langchain.com/docs/use_cases

- 调试部署等指导:https://python.langchain.com/docs/guides/debugging

划重点: 创建一个新的 conda 环境,langchain-learn,再开始下面的学习!

conda create -n langchain-learn python=3.10

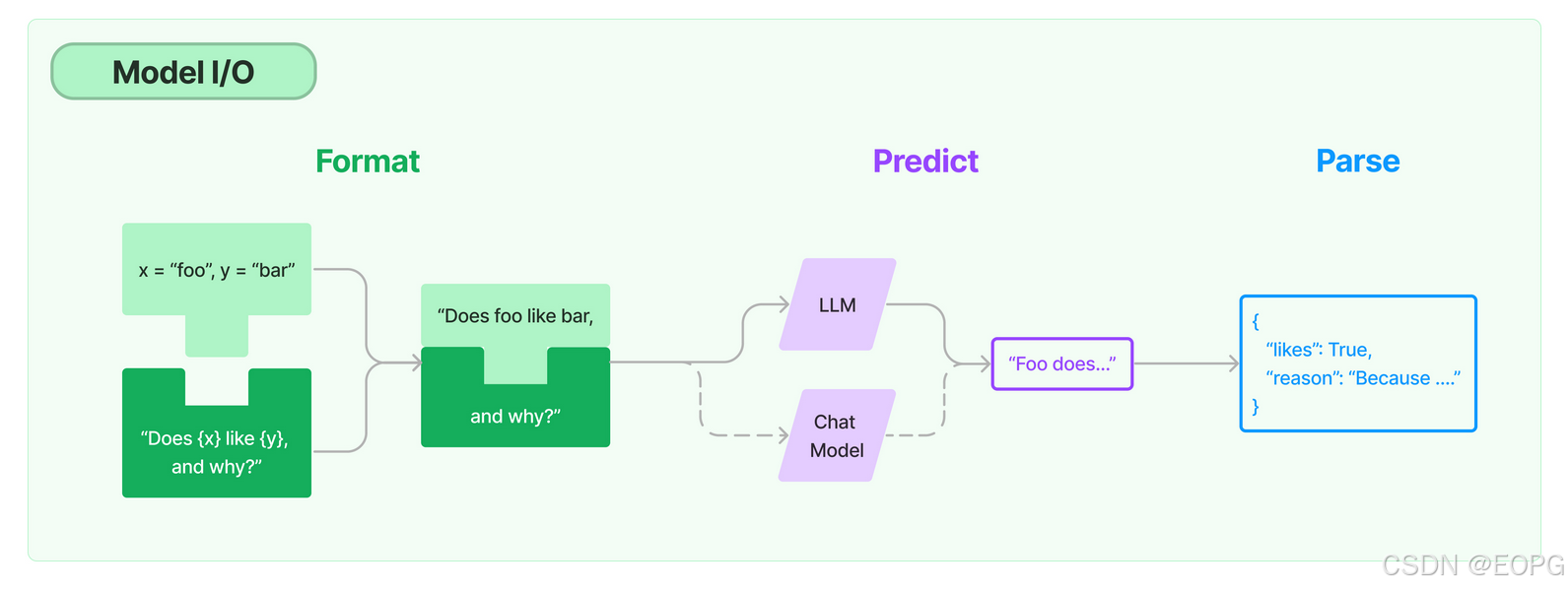

模型 I/O 封装

把不同的模型,统一封装成一个接口,方便更换模型而不用重构代码。

1.1 模型 API:LLM vs. ChatModel

pip install --upgrade langchain

pip install --upgrade langchain-openai

pip install --upgrade langchain-community

1.1.1 OpenAI 模型封装

from langchain_openai import ChatOpenAI

# 保证操作系统的环境变量里面配置好了OPENAI_API_KEY, OPENAI_BASE_URL

llm = ChatOpenAI(model="gpt-4o-mini") # 默认是gpt-3.5-turbo

response = llm.invoke("你是谁")

print(response.content)

我是一个人工智能助手,旨在回答问题和提供信息。如果你有任何问题或需要帮助的地方,随时可以问我!

1.1.2 多轮对话 Session 封装

from langchain.schema import (

AIMessage, # 等价于OpenAI接口中的assistant role

HumanMessage, # 等价于OpenAI接口中的user role

SystemMessage # 等价于OpenAI接口中的system role

)

messages = [

SystemMessage(content="你是聚客AI研究院的课程助理。"),

HumanMessage(content="我是学员,我叫大拿。"),

AIMessage(content="欢迎!"),

HumanMessage(content="我是谁")

]

ret = llm.invoke(messages)

print(ret.content)

你是大拿,一位学员。有什么我可以帮助你的吗?

划重点:通过模型封装,实现不同模型的统一接口调用

1.2 模型的输入与输出

1.2.1 Prompt 模板封装

- PromptTemplate 可以在模板中自定义变量

from langchain.prompts import PromptTemplate

template = PromptTemplate.from_template("给我讲个关于{subject}的笑话")

print("===Template===")

print(template)

print("===Prompt===")

print(template.format(subject='小明'))

=Template=

input_variables=[‘subject’] input_types={} partial_variables={} template=‘给我讲个关于{subject}的笑话’

=Prompt=

给我讲个关于小明的笑话

from langchain_openai import ChatOpenAI

# 定义 LLM

llm = ChatOpenAI(model="gpt-4o-mini")

# 通过 Prompt 调用 LLM

ret = llm.invoke(template.format(subject='小明'))

# 打印输出

print(ret.content)

小明有一天去参加一个学校的科学展览。他看到有个同学在展示一台可以自动写字的机器人。小明觉得很神奇,就问同学:“这个机器人怎么能写字的?”

同学得意地回答:“因为它有一个超级智能的程序!”

小明想了想,摇了摇头说:“那我也要给我的机器人装一个超级智能的程序!”

同学好奇地问:“你打算怎么做?”

小明认真地说:“我打算给它装上‘懒’这个程序,这样它就可以帮我写作业了!”

同学忍不住笑了:“你这是在找借口嘛!”

小明得意地耸耸肩:“反正我只要告诉老师,是机器人写的!”

- ChatPromptTemplate 用模板表示的对话上下文

from langchain.prompts import (

ChatPromptTemplate,

HumanMessagePromptTemplate,

SystemMessagePromptTemplate,

)

from langchain_openai import ChatOpenAI

template = ChatPromptTemplate.from_messages(

[

SystemMessagePromptTemplate.from_template("你是{product}的客服助手。你的名字叫{name}"),

HumanMessagePromptTemplate.from_template("{query}"),

]

)

llm = ChatOpenAI(model="gpt-4o-mini")

prompt = template.format_messages(

product="聚客AI研究院",

name="大吉",

query="你是谁"

)

print(prompt)

ret = llm.invoke(prompt)

print(ret.content)

[SystemMessage(content=‘你是聚客AI研究院的客服助手。你的名字叫大吉’, additional_kwargs={}, response_metadata={}), HumanMessage(content=‘你是谁’, additional_kwargs={}, response_metadata={})]

我是大吉,聚客AI研究院的客服助手。很高兴为您提供帮助!请问有什么我可以为您做的呢?

- MessagesPlaceholder 把多轮对话变成模板

from langchain.prompts import (

ChatPromptTemplate,

HumanMessagePromptTemplate,

MessagesPlaceholder,

)

human_prompt = "Translate your answer to {language}."

human_message_template = HumanMessagePromptTemplate.from_template(human_prompt)

chat_prompt = ChatPromptTemplate.from_messages(

# variable_name 是 message placeholder 在模板中的变量名

# 用于在赋值时使用

[MessagesPlaceholder("history"), human_message_template]

)

from langchain_core.messages import AIMessage, HumanMessage

human_message = HumanMessage(content="Who is Elon Musk?")

ai_message = AIMessage(

content="Elon Musk is a billionaire entrepreneur, inventor, and industrial designer"

)

messages = chat_prompt.format_prompt(

# 对 "history" 和 "language" 赋值

history=[human_message, ai_message], language="中文"

)

print(messages.to_messages())

[HumanMessage(content=‘Who is Elon Musk?’, additional_kwargs={}, response_metadata={}), AIMessage(content=‘Elon Musk is a billionaire entrepreneur, inventor, and industrial designer’, additional_kwargs={}, response_metadata={}), HumanMessage(content=‘Translate your answer to 中文.’, additional_kwargs={}, response_metadata={})]

result = llm.invoke(messages)

print(result.content)

埃隆·马斯克(Elon Musk)是一位亿万富翁企业家、发明家和工业设计师。

划重点:把Prompt模板看作带有参数的函数

1.2.2 从文件加载 Prompt 模板

from langchain.prompts import PromptTemplate

template = PromptTemplate.from_file("example_prompt_template.txt")

print("===Template===")

print(template)

print("===Prompt===")

print(template.format(topic='黑色幽默'))

=Template=

input_variables=[‘topic’] input_types={} partial_variables={} template=‘举一个关于{topic}的例子’

=Prompt=

举一个关于黑色幽默的例子

1.3 结构化输出

1.3.1 直接输出 Pydantic 对象

from pydantic import BaseModel, Field

# 定义你的输出对象

class Date(BaseModel):

year: int = Field(description="Year")

month: int = Field(description="Month")

day: int = Field(description="Day")

era: str = Field(description="BC or AD")

from langchain.prompts import PromptTemplate, ChatPromptTemplate, HumanMessagePromptTemplate

from langchain_openai import ChatOpenAI

from langchain_core.output_parsers import PydanticOutputParser

model_name = 'gpt-4o-mini'

temperature = 0

llm = ChatOpenAI(model_name=model_name, temperature=temperature)

# 定义结构化输出的模型

structured_llm = llm.with_structured_output(Date)

template = """提取用户输入中的日期。

用户输入:

{query}"""

prompt = PromptTemplate(

template=template,

)

query = "2024年十二月23日天气晴..."

input_prompt = prompt.format_prompt(query=query)

structured_llm.invoke(input_prompt)

Date(year=2024, month=12, day=23, era=‘AD’)

1.3.2 输出指定格式的 JSON

json_schema = {

"title": "Date",

"description": "Formated date expression",

"type": "object",

"properties": {

"year": {

"type": "integer",

"description": "year, YYYY",

},

"month": {

"type": "integer",

"description": "month, MM",

},

"day": {

"type": "integer",

"description": "day, DD",

},

"era": {

"type": "string",

"description": "BC or AD",

},

},

}

structured_llm = llm.with_structured_output(json_schema)

structured_llm.invoke(input_prompt)

{‘day’: 23, ‘month’: 12, ‘year’: 2024}

1.3.3 使用 OutputParser

OutputParser 可以按指定格式解析模型的输出

from langchain_core.output_parsers import JsonOutputParser

parser = JsonOutputParser(pydantic_object=Date)

prompt = PromptTemplate(

template="提取用户输入中的日期。\n用户输入:{query}\n{format_instructions}",

input_variables=["query"],

partial_variables={"format_instructions": parser.get_format_instructions()},

)

input_prompt = prompt.format_prompt(query=query)

output = llm.invoke(input_prompt)

print("原始输出:\n"+output.content)

print("\n解析后:")

parser.invoke(output)

原始输出:

{"year": 2024, "month": 12, "day": 23, "era": "AD"}

解析后:

{‘year’: 2024, ‘month’: 12, ‘day’: 23, ‘era’: ‘AD’}

也可以用 PydanticOutputParser

from langchain_core.output_parsers import PydanticOutputParser

parser = PydanticOutputParser(pydantic_object=Date)

input_prompt = prompt.format_prompt(query=query)

output = llm.invoke(input_prompt)

print("原始输出:\n"+output.content)

print("\n解析后:")

parser.invoke(output)

原始输出:

{

"year": 2024,

"month": 12,

"day": 23,

"era": "AD"

}

解析后:

Date(year=2024, month=12, day=23, era=‘AD’)

OutputFixingParser 利用大模型做格式自动纠错

from langchain.output_parsers import OutputFixingParser

new_parser = OutputFixingParser.from_llm(parser=parser, llm=ChatOpenAI(model="gpt-4o"))

bad_output = output.content.replace("4","四")

print("PydanticOutputParser:")

try:

parser.invoke(bad_output)

except Exception as e:

print(e)

print("OutputFixingParser:")

new_parser.invoke(bad_output)

PydanticOutputParser:

Invalid json output: ```json

{

“year”: 202四,

“month”: 12,

“day”: 23,

“era”: “AD”

}

For troubleshooting, visit: https://python.langchain.com/docs/troubleshooting/errors/OUTPUT_PARSING_FAILURE

OutputFixingParser:

Date(year=2024, month=12, day=23, era=‘AD’)

1.4 Function Calling

from langchain_core.tools import tool

@tool

def add(a: int, b: int) -> int:

"""Add two integers.

Args:

a: First integer

b: Second integer

"""

return a + b

@tool

def multiply(a: int, b: int) -> int:

"""Multiply two integers.

Args:

a: First integer

b: Second integer

"""

return a * b

import json

llm_with_tools = llm.bind_tools([add, multiply])

query = "3的4倍是多少?"

messages = [HumanMessage(query)]

output = llm_with_tools.invoke(messages)

print(json.dumps(output.tool_calls, indent=4))

[

{

“name”: “multiply”,

“args”: {

“a”: 3,

“b”: 4

},

“id”: “call_V6lxABmx12g6lIgT8WMUtexM”,

“type”: “tool_call”

}

]

回传 Funtion Call 的结果

messages.append(output)

available_tools = {"add": add, "multiply": multiply}

for tool_call in output.tool_calls:

selected_tool = available_tools[tool_call["name"].lower()]

tool_msg = selected_tool.invoke(tool_call)

messages.append(tool_msg)

new_output = llm_with_tools.invoke(messages)

for message in messages:

print(json.dumps(message.dict(), indent=4, ensure_ascii=False))

print(new_output.content)

{

“content”: “3的4倍是多少?”,

“additional_kwargs”: {},

“response_metadata”: {},

“type”: “human”,

“name”: null,

“id”: null,

“example”: false

}

{

“content”: “”,

“additional_kwargs”: {

“tool_calls”: [

{

“id”: “call_V6lxABmx12g6lIgT8WMUtexM”,

“function”: {

“arguments”: “{“a”:3,“b”:4}”,

“name”: “multiply”

},

“type”: “function”

}

],

“refusal”: null

},

“response_metadata”: {

“token_usage”: {

“completion_tokens”: 18,

“prompt_tokens”: 97,

“total_tokens”: 115,

“completion_tokens_details”: {

“accepted_prediction_tokens”: 0,

“audio_tokens”: 0,

“reasoning_tokens”: 0,

“rejected_prediction_tokens”: 0

},

“prompt_tokens_details”: {

“audio_tokens”: 0,

“cached_tokens”: 0

}

},

“model_name”: “gpt-4o-mini-2024-07-18”,

“system_fingerprint”: “fp_0aa8d3e20b”,

“finish_reason”: “tool_calls”,

“logprobs”: null

},

“type”: “ai”,

“name”: null,

“id”: “run-d25ca9ee-50b1-4848-a79e-42e58803fc7a-0”,

“example”: false,

“tool_calls”: [

{

“name”: “multiply”,

“args”: {

“a”: 3,

“b”: 4

},

“id”: “call_V6lxABmx12g6lIgT8WMUtexM”,

“type”: “tool_call”

}

],

“invalid_tool_calls”: [],

“usage_metadata”: {

“input_tokens”: 97,

“output_tokens”: 18,

“total_tokens”: 115,

“input_token_details”: {

“audio”: 0,

“cache_read”: 0

},

“output_token_details”: {

“audio”: 0,

“reasoning”: 0

}

}

}

{

“content”: “12”,

“additional_kwargs”: {},

“response_metadata”: {},

“type”: “tool”,

“name”: “multiply”,

“id”: null,

“tool_call_id”: “call_V6lxABmx12g6lIgT8WMUtexM”,

“artifact”: null,

“status”: “success”

}

3的4倍是12。

C:\Users\Administrator\AppData\Local\Temp\ipykernel_16608\2298449061.py:12: PydanticDeprecatedSince20: The

dictmethod is deprecated; usemodel_dumpinstead. Deprecated in Pydantic V2.0 to be removed in V3.0. See Pydantic V2 Migration Guide at https://errors.pydantic.dev/2.10/migration/

print(json.dumps(message.dict(), indent=4, ensure_ascii=False))

1.5、小结

- LangChain 统一封装了各种模型的调用接口,包括补全型和对话型两种

- LangChain 提供了 PromptTemplate 类,可以自定义带变量的模板

- LangChain 提供了一些列输出解析器,用于将大模型的输出解析成结构化对象

- LangChain 提供了 Function Calling 的封装

- 上述模型属于 LangChain 中较为实用的部分

二、数据连接封装

2.1 文档加载器:Document Loaders

pip install pymupdf

from langchain_community.document_loaders import PyMuPDFLoader

loader = PyMuPDFLoader("llama2.pdf")

pages = loader.load_and_split()

print(pages[0].page_content)

Llama 2: Open Foundation and Fine-Tuned Chat Models

Hugo Touvron∗

Louis Martin†

Kevin Stone†

Peter Albert Amjad Almahairi Yasmine Babaei Nikolay Bashlykov Soumya Batra

Prajjwal Bhargava Shruti Bhosale Dan Bikel Lukas Blecher Cristian Canton Ferrer Moya Chen

Guillem Cucurull David Esiobu Jude Fernandes Jeremy Fu Wenyin Fu Brian Fuller

Cynthia Gao Vedanuj Goswami Naman Goyal Anthony Hartshorn Saghar Hosseini Rui Hou

Hakan Inan Marcin Kardas Viktor Kerkez Madian Khabsa Isabel Kloumann Artem Korenev

Punit Singh Koura Marie-Anne Lachaux Thibaut Lavril Jenya Lee Diana Liskovich

Yinghai Lu Yuning Mao Xavier Martinet Todor Mihaylov Pushkar Mishra

Igor Molybog Yixin Nie Andrew Poulton Jeremy Reizenstein Rashi Rungta Kalyan Saladi

Alan Schelten Ruan Silva Eric Michael Smith Ranjan Subramanian Xiaoqing Ellen Tan Binh Tang

Ross Taylor Adina Williams Jian Xiang Kuan Puxin Xu Zheng Yan Iliyan Zarov Yuchen Zhang

Angela Fan Melanie Kambadur Sharan Narang Aurelien Rodriguez Robert Stojnic

Sergey Edunov

Thomas Scialom∗

GenAI, Meta

Abstract

In this work, we develop and release Llama 2, a collection of pretrained and fine-tuned

large language models (LLMs) ranging in scale from 7 billion to 70 billion parameters.

Our fine-tuned LLMs, called Llama 2-Chat, are optimized for dialogue use cases. Our

models outperform open-source chat models on most benchmarks we tested, and based on

our human evaluations for helpfulness and safety, may be a suitable substitute for closed-

source models. We provide a detailed description of our approach to fine-tuning and safety

improvements of Llama 2-Chat in order to enable the community to build on our work and

contribute to the responsible development of LLMs.

∗Equal contribution, corresponding authors: {tscialom, htouvron}@meta.com

†Second author

Contributions for all the authors can be found in Section A.1.

arXiv:2307.09288v2 [cs.CL] 19 Jul 2023

2.2 文档处理器

2.2.1 TextSplitter

pip install --upgrade langchain-text-splitters

from langchain_text_splitters import RecursiveCharacterTextSplitter

# 简单的文本内容切割

text_splitter = RecursiveCharacterTextSplitter(

chunk_size=200,

chunk_overlap=100,

length_function=len,

add_start_index=True,

)

paragraphs = text_splitter.create_documents([pages[0].page_content])

for para in paragraphs:

print(para.page_content)

print('-------')

Llama 2: Open Foundation and Fine-Tuned Chat Models

Hugo Touvron∗

Louis Martin†

Kevin Stone†

Peter Albert Amjad Almahairi Yasmine Babaei Nikolay Bashlykov Soumya Batra

Kevin Stone†

Peter Albert Amjad Almahairi Yasmine Babaei Nikolay Bashlykov Soumya Batra

Prajjwal Bhargava Shruti Bhosale Dan Bikel Lukas Blecher Cristian Canton Ferrer Moya Chen

Prajjwal Bhargava Shruti Bhosale Dan Bikel Lukas Blecher Cristian Canton Ferrer Moya Chen

Guillem Cucurull David Esiobu Jude Fernandes Jeremy Fu Wenyin Fu Brian Fuller

Guillem Cucurull David Esiobu Jude Fernandes Jeremy Fu Wenyin Fu Brian Fuller

Cynthia Gao Vedanuj Goswami Naman Goyal Anthony Hartshorn Saghar Hosseini Rui Hou

Cynthia Gao Vedanuj Goswami Naman Goyal Anthony Hartshorn Saghar Hosseini Rui Hou

Hakan Inan Marcin Kardas Viktor Kerkez Madian Khabsa Isabel Kloumann Artem Korenev

Hakan Inan Marcin Kardas Viktor Kerkez Madian Khabsa Isabel Kloumann Artem Korenev

Punit Singh Koura Marie-Anne Lachaux Thibaut Lavril Jenya Lee Diana Liskovich

Punit Singh Koura Marie-Anne Lachaux Thibaut Lavril Jenya Lee Diana Liskovich

Yinghai Lu Yuning Mao Xavier Martinet Todor Mihaylov Pushkar Mishra

Yinghai Lu Yuning Mao Xavier Martinet Todor Mihaylov Pushkar Mishra

Igor Molybog Yixin Nie Andrew Poulton Jeremy Reizenstein Rashi Rungta Kalyan Saladi

Igor Molybog Yixin Nie Andrew Poulton Jeremy Reizenstein Rashi Rungta Kalyan Saladi

Alan Schelten Ruan Silva Eric Michael Smith Ranjan Subramanian Xiaoqing Ellen Tan Binh Tang

Alan Schelten Ruan Silva Eric Michael Smith Ranjan Subramanian Xiaoqing Ellen Tan Binh Tang

Ross Taylor Adina Williams Jian Xiang Kuan Puxin Xu Zheng Yan Iliyan Zarov Yuchen Zhang

Ross Taylor Adina Williams Jian Xiang Kuan Puxin Xu Zheng Yan Iliyan Zarov Yuchen Zhang

Angela Fan Melanie Kambadur Sharan Narang Aurelien Rodriguez Robert Stojnic

Sergey Edunov

Thomas Scialom∗

Sergey Edunov

Thomas Scialom∗

GenAI, Meta

Abstract

In this work, we develop and release Llama 2, a collection of pretrained and fine-tuned

Abstract

In this work, we develop and release Llama 2, a collection of pretrained and fine-tuned

large language models (LLMs) ranging in scale from 7 billion to 70 billion parameters.

large language models (LLMs) ranging in scale from 7 billion to 70 billion parameters.

Our fine-tuned LLMs, called Llama 2-Chat, are optimized for dialogue use cases. Our

Our fine-tuned LLMs, called Llama 2-Chat, are optimized for dialogue use cases. Our

models outperform open-source chat models on most benchmarks we tested, and based on

models outperform open-source chat models on most benchmarks we tested, and based on

our human evaluations for helpfulness and safety, may be a suitable substitute for closed-

our human evaluations for helpfulness and safety, may be a suitable substitute for closed-

source models. We provide a detailed description of our approach to fine-tuning and safety

source models. We provide a detailed description of our approach to fine-tuning and safety

improvements of Llama 2-Chat in order to enable the community to build on our work and

improvements of Llama 2-Chat in order to enable the community to build on our work and

contribute to the responsible development of LLMs.

contribute to the responsible development of LLMs.

∗Equal contribution, corresponding authors: {tscialom, htouvron}@meta.com

†Second author

∗Equal contribution, corresponding authors: {tscialom, htouvron}@meta.com

†Second author

Contributions for all the authors can be found in Section A.1.

arXiv:2307.09288v2 [cs.CL] 19 Jul 2023

类似 LlamaIndex,LangChain 也提供了丰富的 Document Loaders 和 Text Splitters。

2.3、向量数据库与向量检索

conda install -c pytorch faiss-cpu

from langchain_openai import OpenAIEmbeddings

from langchain_text_splitters import RecursiveCharacterTextSplitter

from langchain_community.vectorstores import FAISS

from langchain_openai import ChatOpenAI

from langchain_community.document_loaders import PyMuPDFLoader

# 加载文档

loader = PyMuPDFLoader("llama2.pdf")

pages = loader.load_and_split()

# 文档切分

text_splitter = RecursiveCharacterTextSplitter(

chunk_size=300,

chunk_overlap=100,

length_function=len,

add_start_index=True,

)

texts = text_splitter.create_documents(

[page.page_content for page in pages[:4]]

)

# 灌库

embeddings = OpenAIEmbeddings(model="text-embedding-ada-002")

db = FAISS.from_documents(texts, embeddings)

# 检索 top-3 结果

retriever = db.as_retriever(search_kwargs={"k": 3})

docs = retriever.invoke("llama2有多少参数")

for doc in docs:

print(doc.page_content)

print("----")

but are not releasing.§

2. Llama 2-Chat, a fine-tuned version of Llama 2 that is optimized for dialogue use cases. We release

variants of this model with 7B, 13B, and 70B parameters as well.

We believe that the open release of LLMs, when done safely, will be a net benefit to society. Like all LLMs,

Llama 2-Chat, at scales up to 70B parameters. On the series of helpfulness and safety benchmarks we tested,

Llama 2-Chat models generally perform better than existing open-source models. They also appear to

large language models (LLMs) ranging in scale from 7 billion to 70 billion parameters.

Our fine-tuned LLMs, called Llama 2-Chat, are optimized for dialogue use cases. Our

models outperform open-source chat models on most benchmarks we tested, and based on

更多的三方检索组件链接,参考:https://python.langchain.com/v0.3/docs/integrations/vectorstores/

2.4、小结

- 文档处理部分,建议在实际应用中详细测试后使用

- 与向量数据库的链接部分本质是接口封装,向量数据库需要自己选型

三、对话历史管理

3.1、历史记录的剪裁

from langchain_core.messages import (

AIMessage,

HumanMessage,

SystemMessage,

trim_messages,

)

from langchain_openai import ChatOpenAI

messages = [

SystemMessage("you're a good assistant, you always respond with a joke."),

HumanMessage("i wonder why it's called langchain"),

AIMessage(

'Well, I guess they thought "WordRope" and "SentenceString" just didn\'t have the same ring to it!'

),

HumanMessage("and who is harrison chasing anyways"),

AIMessage(

"Hmmm let me think.\n\nWhy, he's probably chasing after the last cup of coffee in the office!"

),

HumanMessage("what do you call a speechless parrot"),

]

trim_messages(

messages,

max_tokens=45,

strategy="last",

token_counter=ChatOpenAI(model="gpt-4o-mini"),

)

[AIMessage(content=“Hmmm let me think.\n\nWhy, he’s probably chasing after the last cup of coffee in the office!”, additional_kwargs={}, response_metadata={}),

HumanMessage(content=‘what do you call a speechless parrot’, additional_kwargs={}, response_metadata={})]

#保留 system prompt

trim_messages(

messages,

max_tokens=45,

strategy=“last”,

token_counter=ChatOpenAI(model=“gpt-4o-mini”),

include_system=True,

allow_partial=True,

)

[SystemMessage(content=“you’re a good assistant, you always respond with a joke.”, additional_kwargs={}, response_metadata={}),

HumanMessage(content=‘what do you call a speechless parrot’, additional_kwargs={}, response_metadata={})]

3.2、过滤带标识的历史记录

from langchain_core.messages import (

AIMessage,

HumanMessage,

SystemMessage,

filter_messages,

)

messages = [

SystemMessage("you are a good assistant", id="1"),

HumanMessage("example input", id="2", name="example_user"),

AIMessage("example output", id="3", name="example_assistant"),

HumanMessage("real input", id="4", name="bob"),

AIMessage("real output", id="5", name="alice"),

]

filter_messages(messages, include_types="human")

[HumanMessage(content=‘example input’, additional_kwargs={}, response_metadata={}, name=‘example_user’, id=‘2’),

HumanMessage(content=‘real input’, additional_kwargs={}, response_metadata={}, name=‘bob’, id=‘4’)]

filter_messages(messages, exclude_names=["example_user", "example_assistant"])

[SystemMessage(content=‘you are a good assistant’, additional_kwargs={}, response_metadata={}, id=‘1’),

HumanMessage(content=‘real input’, additional_kwargs={}, response_metadata={}, name=‘bob’, id=‘4’),

AIMessage(content=‘real output’, additional_kwargs={}, response_metadata={}, name=‘alice’, id=‘5’)]

filter_messages(messages, include_types=[HumanMessage, AIMessage], exclude_ids=["3"])

[HumanMessage(content=‘example input’, additional_kwargs={}, response_metadata={}, name=‘example_user’, id=‘2’),

HumanMessage(content=‘real input’, additional_kwargs={}, response_metadata={}, name=‘bob’, id=‘4’),

AIMessage(content=‘real output’, additional_kwargs={}, response_metadata={}, name=‘alice’, id=‘5’)]

四、Chain 和 LangChain Expression Language (LCEL)

LangChain Expression Language(LCEL)是一种声明式语言,可轻松组合不同的调用顺序构成 Chain。LCEL 自创立之初就被设计为能够支持将原型投入生产环境,无需代码更改,从最简单的“提示+LLM”链到最复杂的链(已有用户成功在生产环境中运行包含数百个步骤的 LCEL Chain)。

LCEL 的一些亮点包括:

流支持:使用 LCEL 构建 Chain 时,你可以获得最佳的首个令牌时间(即从输出开始到首批输出生成的时间)。对于某些 Chain,这意味着可以直接从 LLM 流式传输令牌到流输出解析器,从而以与 LLM 提供商输出原始令牌相同的速率获得解析后的、增量的输出。

异步支持:任何使用 LCEL 构建的链条都可以通过同步 API(例如,在 Jupyter 笔记本中进行原型设计时)和异步 API(例如,在 LangServe 服务器中)调用。这使得相同的代码可用于原型设计和生产环境,具有出色的性能,并能够在同一服务器中处理多个并发请求。

优化的并行执行:当你的 LCEL 链条有可以并行执行的步骤时(例如,从多个检索器中获取文档),我们会自动执行,无论是在同步还是异步接口中,以实现最小的延迟。

重试和回退:为 LCEL 链的任何部分配置重试和回退。这是使链在规模上更可靠的绝佳方式。目前我们正在添加重试/回退的流媒体支持,因此你可以在不增加任何延迟成本的情况下获得增加的可靠性。

访问中间结果:对于更复杂的链条,访问在最终输出产生之前的中间步骤的结果通常非常有用。这可以用于让最终用户知道正在发生一些事情,甚至仅用于调试链条。你可以流式传输中间结果,并且在每个 LangServe 服务器上都可用。

输入和输出模式:输入和输出模式为每个 LCEL 链提供了从链的结构推断出的 Pydantic 和 JSONSchema 模式。这可以用于输入和输出的验证,是 LangServe 的一个组成部分。

无缝 LangSmith 跟踪集成:随着链条变得越来越复杂,理解每一步发生了什么变得越来越重要。通过 LCEL,所有步骤都自动记录到 LangSmith,以实现最大的可观察性和可调试性。

无缝 LangServe 部署集成:任何使用 LCEL 创建的链都可以轻松地使用 LangServe 进行部署。

原文:https://python.langchain.com/docs/expression_language/

4.1 Pipeline 式调用 PromptTemplate, LLM 和 OutputParser

from langchain_openai import ChatOpenAI

from langchain.prompts import ChatPromptTemplate

from langchain_core.output_parsers import StrOutputParser

from langchain_core.runnables import RunnablePassthrough

from pydantic import BaseModel, Field, validator

from typing import List, Dict, Optional

from enum import Enum

import json

# 输出结构

class SortEnum(str, Enum):

data = 'data'

price = 'price'

class OrderingEnum(str, Enum):

ascend = 'ascend'

descend = 'descend'

class Semantics(BaseModel):

name: Optional[str] = Field(description="流量包名称", default=None)

price_lower: Optional[int] = Field(description="价格下限", default=None)

price_upper: Optional[int] = Field(description="价格上限", default=None)

data_lower: Optional[int] = Field(description="流量下限", default=None)

data_upper: Optional[int] = Field(description="流量上限", default=None)

sort_by: Optional[SortEnum] = Field(description="按价格或流量排序", default=None)

ordering: Optional[OrderingEnum] = Field(description="升序或降序排列", default=None)

# Prompt 模板

prompt = ChatPromptTemplate.from_messages(

[

("system", "你是一个语义解析器。你的任务是将用户的输入解析成JSON表示。不要回答用户的问题。"),

("human", "{text}"),

]

)

# 模型

llm = ChatOpenAI(model="gpt-4o", temperature=0)

structured_llm = llm.with_structured_output(Semantics)

# LCEL 表达式

runnable = (

{"text": RunnablePassthrough()} | prompt | structured_llm

)

# 直接运行

ret = runnable.invoke("不超过100元的流量大的套餐有哪些")

print(

json.dumps(

ret.dict(),

indent = 4,

ensure_ascii=False

)

)

{

“name”: null,

“price_lower”: null,

“price_upper”: 100,

“data_lower”: null,

“data_upper”: null,

“sort_by”: “data”,

“ordering”: “descend”

}

C:\Users\Administrator\AppData\Local\Temp\ipykernel_16608\4198727415.py:44: PydanticDeprecatedSince20: The

dictmethod is deprecated; usemodel_dumpinstead. Deprecated in Pydantic V2.0 to be removed in V3.0. See Pydantic V2 Migration Guide at https://errors.pydantic.dev/2.10/migration/

ret.dict(),

流式输出

prompt = PromptTemplate.from_template("讲个关于{topic}的笑话")

runnable = (

{"topic": RunnablePassthrough()} | prompt | llm | StrOutputParser()

)

# 流式输出

for s in runnable.stream("小明"):

print(s, end="", flush=True)

好的,那我就给你讲个关于小明的笑话吧!

一天,老师问小明:“如果地球是方的,那会怎么样?”

小明思考了一会儿,非常认真地回答:“那我小时候玩捉迷藏就不用被人发现了,因为我可以直接躲在地球的拐角处!”

老师差点笑到掉书!

注意: 在当前的文档中 LCEL 产生的对象,被叫做 runnable 或 chain,经常两种叫法混用。本质就是一个自定义调用流程。

使用 LCEL 的价值,也就是 LangChain 的核心价值。

官方从不同角度给出了举例说明:https://python.langchain.com/v0.1/docs/expression_language/why/

4.2 用 LCEL 实现 RAG

from langchain_openai import OpenAIEmbeddings

from langchain_text_splitters import RecursiveCharacterTextSplitter

from langchain_community.vectorstores import FAISS

from langchain_openai import ChatOpenAI

from langchain.chains import RetrievalQA

from langchain_community.document_loaders import PyMuPDFLoader

# 加载文档

loader = PyMuPDFLoader("llama2.pdf")

pages = loader.load_and_split()

# 文档切分

text_splitter = RecursiveCharacterTextSplitter(

chunk_size=300,

chunk_overlap=100,

length_function=len,

add_start_index=True,

)

texts = text_splitter.create_documents(

[page.page_content for page in pages[:4]]

)

# 灌库

embeddings = OpenAIEmbeddings(model="text-embedding-ada-002")

db = FAISS.from_documents(texts, embeddings)

# 检索 top-2 结果

retriever = db.as_retriever(search_kwargs={"k": 2})

from langchain.schema.output_parser import StrOutputParser

from langchain.schema.runnable import RunnablePassthrough

# Prompt模板

template = """Answer the question based only on the following context:

{context}

Question: {question}

"""

prompt = ChatPromptTemplate.from_template(template)

# Chain

rag_chain = (

{"question": RunnablePassthrough(), "context": retriever}

| prompt

| llm

| StrOutputParser()

)

rag_chain.invoke("Llama 2有多少参数")

‘根据提供的上下文,Llama 2 有 7B(70亿)、13B(130亿)和 70B(700亿)参数的不同版本。’

4.3 用 LCEL 实现工厂模式

from langchain_core.runnables.utils import ConfigurableField

from langchain_openai import ChatOpenAI

from langchain_community.chat_models import QianfanChatEndpoint

from langchain.prompts import (

ChatPromptTemplate,

HumanMessagePromptTemplate,

)

from langchain.schema import HumanMessage

import os

# 模型1

ernie_model = QianfanChatEndpoint(

qianfan_ak=os.getenv('ERNIE_CLIENT_ID'),

qianfan_sk=os.getenv('ERNIE_CLIENT_SECRET')

)

# 模型2

gpt_model = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# 通过 configurable_alternatives 按指定字段选择模型

model = gpt_model.configurable_alternatives(

ConfigurableField(id="llm"),

default_key="gpt",

ernie=ernie_model,

# claude=claude_model,

)

# Prompt 模板

prompt = ChatPromptTemplate.from_messages(

[

HumanMessagePromptTemplate.from_template("{query}"),

]

)

# LCEL

chain = (

{"query": RunnablePassthrough()}

| prompt

| model

| StrOutputParser()

)

# 运行时指定模型 "gpt" or "ernie"

ret = chain.with_config(configurable={"llm": "gpt"}).invoke("请自我介绍")

print(ret)

思考:从模块间解依赖角度,LCEL的意义是什么?

4.4 存储与管理对话历史

from langchain_community.chat_message_histories import SQLChatMessageHistory

def get_session_history(session_id):

# 通过 session_id 区分对话历史,并存储在 sqlite 数据库中

return SQLChatMessageHistory(session_id, "sqlite:///memory.db")

from langchain_core.messages import HumanMessage

from langchain_core.runnables.history import RunnableWithMessageHistory

from langchain_openai import ChatOpenAI

from langchain.schema.output_parser import StrOutputParser

model = ChatOpenAI(model="gpt-4o-mini", temperature=0)

runnable = model | StrOutputParser()

runnable_with_history = RunnableWithMessageHistory(

runnable, # 指定 runnable

get_session_history, # 指定自定义的历史管理方法

)

runnable_with_history.invoke(

[HumanMessage(content="你好,我叫大拿")],

config={"configurable": {"session_id": "dana"}},

)

d:\envs\langchain-learn\lib\site-packages\langchain_core\runnables\history.py:608: LangChainDeprecationWarning:

connection_stringwas deprecated in LangChain 0.2.2 and will be removed in 1.0. Use connection instead.

message_history = self.get_session_history(

‘你好,大拿!很高兴再次见到你。有任何问题或想聊的话题吗?’

runnable_with_history.invoke(

[HumanMessage(content="你知道我叫什么名字")],

config={"configurable": {"session_id": "dana"}},

)

‘是的,你叫大拿。有什么我可以为你做的呢?’

runnable_with_history.invoke(

[HumanMessage(content="你知道我叫什么名字")],

config={"configurable": {"session_id": "test"}},

)

‘抱歉,我不知您的名字。如果您愿意,可以告诉我您的名字。’

通过 LCEL,还可以实现

- 配置运行时变量:https://python.langchain.com/v0.3/docs/how_to/configure/

- 故障回退:https://python.langchain.com/v0.3/docs/how_to/fallbacks

- 并行调用:https://python.langchain.com/v0.3/docs/how_to/parallel/

- 逻辑分支:https://python.langchain.com/v0.3/docs/how_to/routing/

- 动态创建 Chain: https://python.langchain.com/v0.3/docs/how_to/dynamic_chain/

更多例子:https://python.langchain.com/v0.3/docs/how_to/lcel_cheatsheet/

五、LangServe

LangServe 用于将 Chain 或者 Runnable 部署成一个 REST API 服务。

# 安装 LangServe

# pip install --upgrade "langserve[all]"

# 也可以只安装一端

# pip install "langserve[client]"

# pip install "langserve[server]"

5.1、Server 端

#!/usr/bin/env python

from fastapi import FastAPI

from langchain.prompts import ChatPromptTemplate

from langchain_openai import ChatOpenAI

from langserve import add_routes

import uvicorn

app = FastAPI(

title="LangChain Server",

version="1.0",

description="A simple api server using Langchain's Runnable interfaces",

)

model = ChatOpenAI(model="gpt-4o-mini")

prompt = ChatPromptTemplate.from_template("讲一个关于{topic}的笑话")

add_routes(

app,

prompt | model,

path="/joke",

)

if __name__ == "__main__":

uvicorn.run(app, host="localhost", port=9999)

5.2、Client 端

import requests

response = requests.post(

"http://localhost:9999/joke/invoke",

json={'input': {'topic': '小明'}}

)

print(response.json())

LangChain 与 LlamaIndex 的错位竞争

LangChain 侧重与 LLM 本身交互的封装

Prompt、LLM、Message、OutputParser 等工具丰富

在数据处理和 RAG 方面提供的工具相对粗糙

主打 LCEL 流程封装

配套 Agent、LangGraph 等智能体与工作流工具

另有 LangServe 部署工具和 LangSmith 监控调试工具

LlamaIndex 侧重与数据交互的封装

数据加载、切割、索引、检索、排序等相关工具丰富

Prompt、LLM 等底层封装相对单薄

配套实现 RAG 相关工具

有 Agent 相关工具,不突出

LlamaIndex 为 LangChain 提供了集成

在 LlamaIndex 中调用 LangChain 封装的 LLM 接口:https://docs.llamaindex.ai/en/stable/api_reference/llms/langchain/

将 LlamaIndex 的 Query Engine 作为 LangChain Agent 的工具:https://docs.llamaindex.ai/en/v0.10.17/community/integrations/using_with_langchain.html

LangChain 也 曾经 集成过 LlamaIndex,目前相关接口仍在:https://api.python.langchain.com/en/latest/retrievers/langchain_community.retrievers.llama_index.LlamaIndexRetriever.html

总结

LangChain 随着版本迭代可用性有明显提升

使用 LangChain 要注意维护自己的 Prompt,尽量 Prompt 与代码逻辑解依赖

它的内置基础工具,建议充分测试效果后再决定是否使用